I dug this quick tour from Robert Hranitzky, which offers a peek behind the scenes of a recent project:

Category Archives: After Effects

Mograph: Canva Calls In The Cavalry

“Wow, that’s some really sharp After Effects work,” I thought last year, when my wife showed me some animation her Airbnb colleague had created. But nope—the work came straight out of Canva.

Not content to chill with their surprisingly capable foundation, Canva is continuing to build out the “Creative Operating System” and has announced the acquisition of up-and-coming 2D animation tool Cavalry:

In their blog post they seem pretty adamant that the acquisition won’t result in dumbing down the core app:

Built for professional motion designers

Cavalry earned its place in the motion design world by doing something different. Its procedural, systems-based approach prioritises flexibility, repeatability, and performance. It wasn’t built as a simplified alternative; it was built specifically for professional motion designers and the complex workflows they rely on. That professional focus remains central.

We’ve invested in Cavalry because of its depth as a professional-grade motion tool. The goal isn’t to simplify what makes it powerful, but to support and strengthen it. Professional motion design demands precision, flexibility, and tools that can scale across complex projects.

Much as with their acquisition of Affinity, however, I’d fully expect Canva to integrate underlying tech into the core design platform, radically simplifying the interface to it—including by providing agentic and chat-based touchpoints.

As with the myriad node-based systems that sprang up last year, I wouldn’t expect most people to ever see or touch the underlying data structures. Rather, what’s essential is that the main tool can understand & modify them, so that it can deliver brilliant results at scale. That necessitates a very approachable, and totally complementary, UX.

I’m excited to see what’s next!

The Rive founders talk interactive animation

Having gotten my start in Flash 2.0 (!), and having joined Adobe in 2000 specifically to make a Flash/SVG authoring tool that didn’t make me want to walk into the ocean, I felt my cold, ancient Grinch-heart grow three sizes listening to Guido and Luigi Rosso—the brother founders behind Rive—on the School of Motion podcast:

[They] dig into what makes this platform different, where it’s headed, and why teams at Spotify, Duolingo, and LinkedIn are building entire interactive experiences with it!

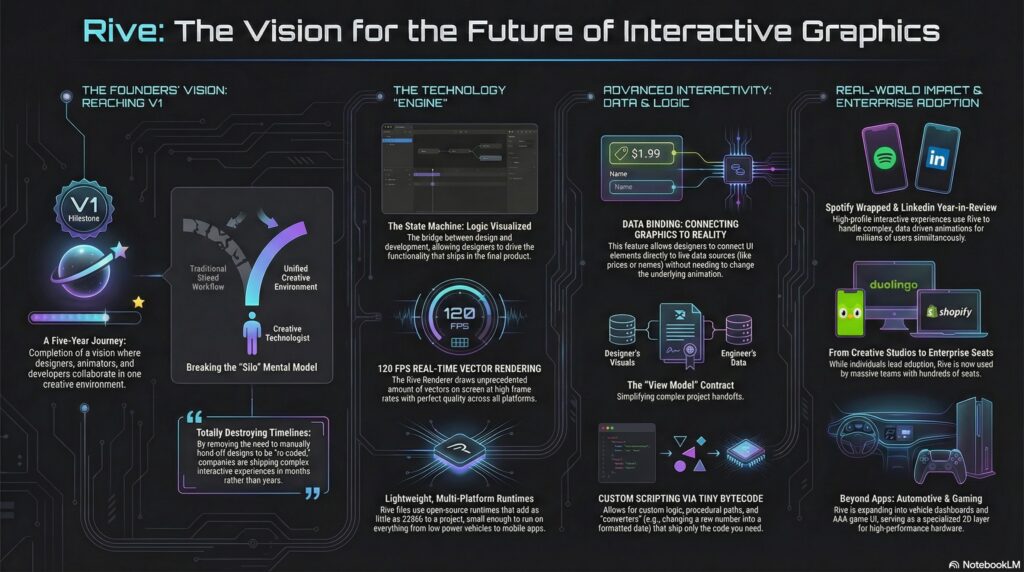

Here’s a NotebookLM-made visualization of the key ideas:

Table of contents:

- Reflecting on 2025: A Year of Milestones (00:24)
- The Challenges of a Three-Sided Marketplace (02:58)
- Adoption Across Designers, Developers, and Companies (04:11)
- The Evolution of Design and Development Collaboration (05:46)
- The Power of Data Binding and Scripting (07:01)
- Rive’s Impact on Product Teams and Large Enterprises (09:18)
- The Future of Interactive Experiences with Rive (12:36)
- Understanding Rive’s Mental Model and Scripting (24:32)
- Comparing Rive’s Scripting to After Effects and Flash
- The Vision for Rive in Game Development (31:30)
- Real-Time Data Integration and Future Possibilities (40:26)
- Spotify Wrapped: A Showcase of Rive’s Potential (42:08)
- Breaking Down Complex Experiences (46:18)
- Creative Technologists and Their Impact (51:07)
- The Future of Rive: 3D and Beyond (59:30)
- Opportunities for Motion Designers with Rive (1:11:38)

Beautiful new AI mograph explorations

Check out this new work from Alex Patrascu. As generative video tools continue to improve in power & precision, what’ll be the role of traditional apps like After Effects? ¯\_(ツ)_/¯

Liquid Logos

With the right prompt, anything is possible with AI video today.

Images: OpenAI Sora

Animation: Kling 2.1

Editing: CapCut pic.twitter.com/yOgDEwahCo

— Alex Patrascu (@maxescu) September 5, 2025

Quick tip:

If you want to utilize the full power of Start/End frames (Kling or Hailuo), you can make the first frame empty with your desired color and describe what happens until the last frame is revealed.

Super powerful: pic.twitter.com/xTaQsB06Kt

— Alex Patrascu (@maxescu) September 7, 2025

A love letter to splats

Paul Trillo relentlessly redefines what’s possible in VFX—in this case scanning his back yard to tour a magical tiny world:

Getting my hands dirty with 30 Gaussian splats scanned in my garden. Is this the most splats ever in a single shot?

Made with the support of @Lenovo @Snapdragon and the new Gaussian Splatting plugin by @irrealix pic.twitter.com/ezXo6MMnQi

— Paul Trillo (@paultrillo) October 3, 2024

Here he gives a peek behind the scenes:

How I created the love letter to the garden and bashed together 30 different Gaussian splats into a single scene pic.twitter.com/OKxDFtK8uE

— Paul Trillo (@paultrillo) October 18, 2024

And here’s the After Effects plugin he used:

Step (and fly) into Spike Jonze’s Kenzo World

If you never see the use of After Effects in this delightfully madcap vid—well, that’s exactly as it should be. Apparently the filmmakers were featured in an Adobe trade show booth after it was released.

In any event, go nuts, Margaret Qualley!

Newton promises fun physics-based animation for After Effects

I haven’t gotten to try it out yet, but Newton looks like a lot of fun:

“Hundreds of Beavers”: A bizarre, AE-infused romp

I haven’t yet seen Hundreds of Beavers, but it looks gloriously weird:

I particularly enjoyed this Movie Mindset podcast episode, which in part plays as a fantastic tribute to the power of After Effects:

We sit down with Mike Cheslik, the director of the new(ish) silent comedy action farce Hundreds of Beavers. We discuss his Wisconsin influences, ultra-DIY approach to filmmaking, making your film exactly as stupid as it needs to be, and the inherent humor of watching a guy in a mascot costume get wrecked on camera.

After Effects + Midjourney + Runway = Harry Potter magic

It’s bonkers what one person can now create—bonkers!

I edited out ziplines to make a Harry Potter flying video, added something special at the end

by u/moviemaker887 in r/AfterEffects

I took a video of a guy zip lining in full Harry Potter costume and edited out the zip lines to make it look like he was flying. I mainly used Content Aware Fill and the free Red Giant/Maxon script 3D Plane Stamp to achieve this.

For the surprise bit at the end, I used Midjourney and Runway’s Motion Brush to generate and animate the clothing.

Trapcode Particular was used for the rain in the final shot.

I also did a full sky replacement in each shot and used assets from ProductionCrate for the lighting and magic wand blast.

[Via Victoria Nece]

Old-timey Fast & Furious

Fun stuff from Red Giant:

AE + GPT: Good for you, good for me

Check out my teammate CJ’s exploration around using ChatGPT to produce expression code for use in After Effects:

Adobe announces new Firefly plans for video

Our friends in Digital Video & Audio have lots of interesting irons in the fire!

From the team blog post:

To start, we’re exploring a range of concepts, including:

- Text to color enhancements: Change color schemes, time of day, or even the seasons in already-recorded videos, instantly altering the mood and setting to evoke a specific tone and feel. With a simple prompt like “Make this scene feel warm and inviting,” the time between imagination and final product can all but disappear.

- Advanced music and sound effects: Creators can easily generate royalty-free custom sounds and music to reflect a certain feeling or scene for both temporary and final tracks.

- Stunning fonts, text effects, graphics, and logos: With a few simple words and in a matter of minutes, creators can generate subtitles, logos and title cards and custom contextual animations.

- Powerful script and B-roll capabilities: Creators can dramatically accelerate pre-production, production and post-production workflows using AI analysis of script to text to automatically create storyboards and previsualizations, as well as recommending b-roll clips for rough or final cuts.

- Creative assistants and co-pilots: With personalized generative AI-powered “how-tos,” users can master new skills and accelerate processes from initial vision to creation and editing.

A pair of cute Firefly animations

O.G. animator Chris Georgenes has been making great stuff since the ’90s (anybody else remember Home Movies?), and now he’s embracing Adobe Firefly. He’s using it with both Adobe Animate…

…and After Effects:

Generative dancing about architecture

Paul Trillo is back at it, extending a Chinese restaurant via Stable Diffusion, After Effects, and Runway:

Elsewhere, check out this mutating structure. (Next up: Falling Water made of actual falling water?)

Tom Cruise -> Raptors!

Hah—this kidding-not-kidding piece from Red Giant is pretty great:

The @ButWithRaptors account is full of wonderful silliness:

AE + DALL•E = Concept car madness

More wildly impressive inpainting & animation from Paul Trillo:

More DALL•E + After Effects magic

Creator Paul Trillo (see previous) is back at it. Here’s new work + a peek into how it’s made:

Animated magic made via DALL•E + After Effects

😮

Frame.io is now available in Premiere & AE

To quote this really cool Adobe video PM who also lives in my house 😌, and who just happens to have helped bring Frame.io into Adobe,

Super excited to announce that Frame.io is now included with your Creative Cloud subscription. Frame panels are now included in After Effects and Premiere Pro. Check it out!

Take advantage of the industry's most powerful video review and collaboration tools all in one place. Introducing https://t.co/JdJeu2YuK6 for Creative Cloud – now included in #PremierePro and #AfterEffects. https://t.co/5xPF0xLYjN pic.twitter.com/aqolPm90MZ

— Adobe Video & Motion (@AdobeVideo) April 12, 2022

From the integration FAQ:

Frame.io for Creative Cloud includes real-time review and approval tools with commenting and frame-accurate annotations, accelerated file transfers for fast uploading and downloading of media, 100GB of dedicated Frame.io cloud storage, the ability to work on up to 5 different projects with another user, free sharing with an unlimited number of reviewers, and Camera to Cloud.

A modern take on “Take On Me”

Back in 2013 I found myself on a bus full of USC film students, and I slowly realized that the guy seated next to me had created the Take On Me vid. Not long after, I was at Google, where my friend recreated the effect in realtime AR. Perhaps needless to say, they didn’t do anything with it. ¯\_(ツ)_/¯

In any event, now Action Movie Dad Daniel Hashimoto has created a loving homage as a tutorial video (!).

Here’s the full-length version:

Special hat tip on the old CoSA vibes:

Say it -> Select it: Runway ML promises semantic video segmentation

I find myself recalling something that Twitter co-founder Evan Williams wrote about “value moving up the stack”:

As industries evolve, core infrastructure gets built and commoditized, and differentiation moves up the hierarchy of needs from basic functionality to non-basic functionality, to design, and even to fashion.

For example, there was a time when chief buying concerns included how well a watch might tell time and how durable a pair of jeans was.

Now apps like Facetune deliver what used to be Photoshop-only levels of power to millions of people, and Runway ML promises to let you simply type words to select & track objects in video, all in a Web browser. 👀

New eng & marketing opportunities in Adobe video

Come join my wife & her badass team!

- Senior Software Engineer — Video Acceleration Platform (San Jose, USA)

- Senior Software Engineer — Cloud Video (San Jose or San Francisco, USA)

- Computer Scientist — Video Color (Noida or Bangalore, India)

- Senior Software Engineer — UI Platform / Drover (San Jose, USA)

- Software Engineer — Video Formats (San Jose or Seattle, USA)

- Software Automation Engineer — Video Formats (San Jose or Seattle, USA)

- Senior Software Engineer — Premiere Pro (San Jose or Seattle, USA)

- Software Engineer — Video Render Technology (Noida or Bangalore, India)

- Senior Product Marketing Manager — Digital Video and Audio (San Jose or Seattle, USA)

Roto Brush Rocks

The newly upgraded After Effects Roto Brush (see previous), now available in beta form, helps enable wondrous things:

And here it’s used in crafting a little salty political fun:

Roto Brush 2: Semantic Boogaloo

Back in 2018 I wrote,

Wanna feel like walking directly into the ocean? Try painstakingly isolating an object in frame after frame of video. Learning how to do this in the ’90s (using stone knives & bear skins, naturally), I just as quickly learned that I never wanted to do it again.

Happily the AE crew has kept improving automated tools, and they’ve just rolled out Roto Brush 2 in beta form. Ian Sansevera shows (below) how it compares & how to use it, and John Columbo provides a nice written overview.

In this After Effects tutorial I will explore and show you how to use Rotobrush 2 (which is insane by the way). Powered by Sensei, Roto Brush 2 will select and track the object, frame by frame, isolating the subject automatically.

VFX: “Impossible Videos”

Former Google intern Kevin Lustgarten produces some delightful sleights of hand. Enjoy!

I will not be trying to replicate his Jedi push-up technique anytime soon!