That’s my core takeaway from this great conversation, which will give you hope.

Slack & Flickr founder Stewart Butterfield, whose “We Don’t Sell Saddles Here” memo I’ve referenced countless times, sat down for a colorful & wide-ranging talk with Lenny Rachitsky. For the key points, check out this summary, or dive right into the whole chat. You won’t regret it.

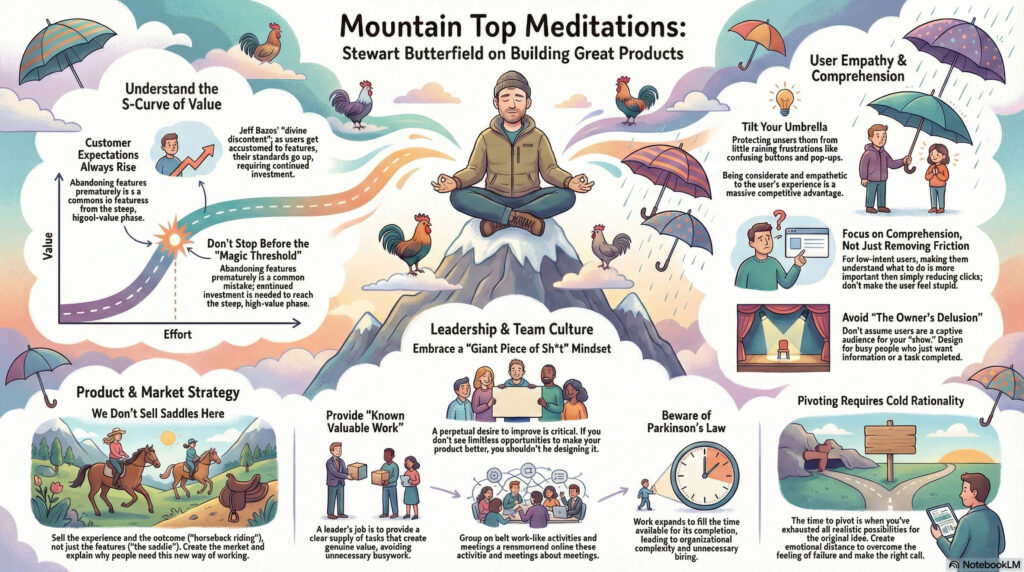

Visual summary courtesy of NotebookLM:

(00:00) Introduction to Stewart Butterfield

(04:58) Stewart’s current life and reflections

(06:44) Understanding utility curves

(10:13) The concept of divine discontent

(15:11) The importance of taste in product design

(19:03) Tilting your umbrella

(28:32) Balancing friction and comprehension

(45:07) The value of constant dissatisfaction

(47:06) Embracing continuous improvement

(50:03) The complexity of making things work

(54:27) Parkinson’s law and organizational growth

(01:03:17) Hyper-realistic work-like activities

(01:13:23) Advice on when to pivot

(01:18:36) The importance of generosity in leadership

(01:26:34) The owner’s delusion