The Flavawagon rides again—emotionally safely! 😀

Context: 25 years ago she helped me flame the original Flav. https://t.co/dxukPMwd3q pic.twitter.com/oLBd3t4E7T

— John Nack (@jnack) May 19, 2026

Here’s a great little one-minute explainer, featuring a couple of fun examples I hadn’t yet seen:

This is such a wild, game-changing feature:

Gemini Omni is a major leap in world understanding & multimodal editing! It can take photos, video & audio and build entirely new scenes. Over time it’ll be able to handle any input & any output – starting w/ video

You can even give it your own videos & iterate on your ideas: pic.twitter.com/VrHPJKRJXH

— Demis Hassabis (@demishassabis) May 19, 2026

I think Carlos gets it exactly right: “I think many are focusing on the wrong aspect of the Gemini Omni model when comparing it to Seedance 2.0, since conceptually they are entirely different things. This is a model for editing videos (like Nano Banana) like we’ve never had before!”

I think many people are approaching the Gemini Omni model the wrong way by comparing it to Seedance 2.0, when conceptually they’re different things.

This is a model for editing videos (à la Nano Banana) like we’ve never had before! pic.twitter.com/eaqngSnbCD

— Carlos Santana (@DotCSV) May 19, 2026

i don’t think you understand how insane omni is pic.twitter.com/wvj4B4a59B

— Sam Sheffer (@samsheffer) May 19, 2026

Editing videos is where Gemini Omni Flash really shines. It is so incredibly capable.

> Make it New Year’s Eve with fireworks. Update the clock

London launched the fireworks early. https://t.co/cTGMPbT3tZ pic.twitter.com/c3Kh1y2KO5

— fofr (@fofrAI) May 19, 2026

I need Omni fireflies in @AdobeFirefly (Yo dawg…) https://t.co/BqvcTPo905

— John Nack (@jnack) May 19, 2026

I’m so pleased to be playing a very small role in bringing breakthrough video transformation to the world. Check out the new Gemini Omni:

The team writes,

We’re introducing Gemini Omni, where Gemini’s ability to reason meets the ability to create. Omni is our new model that can create anything from any input — starting with video. With Omni, you can combine images, audio, video and text as input and generate high-quality videos grounded in Gemini’s real-world knowledge. You can also easily edit your videos through conversation.

Today, we’re rolling out the first model in the Omni family: Gemini Omni Flash, to the Gemini app, Google Flow and YouTube Shorts. In time we will support output modalities like image and audio.

Conversational video editing is the real breakthrough:

Check it out & let us know what you think!

I keep finding myself thinking of this observation from Paul Graham:

“In preindustrial times most people’s jobs made them strong. Now if you want to be strong, you work out. So there are still strong people, but only those who choose to be. It will be the same with writing. There will still be smart people, but only those who choose to be.”

To reiterate from a previous post, quoting Keep the Robots Out of the Gym:

Think very carefully about where you get help from AI.

I think of it as Job vs. Gym.

- If we’re working a manual labor job, it’s fine to have AI lift heavy things for us because the actual goal is to move the thing, not to lift it.

- This is the exact opposite of going to the gym, where the goal is to lift the weight, not to move it.

He argues for identifying gym tasks (e.g. critical thinking, problem solving) and, for those, using just your brain (with minimal AI assistance, if any).

My primary metric for this is whether or not I am getting sharper at the skills that are closest to my identity.

As I often said back in the day, Google’s longstanding mission is to “organize the world’s information and make it universally accessible and useful.” A lot of that information is photographic, and a lot of that information is private; hence the value and power of Google Photos. It knows (with your blessing) who’s who, what places are important, and so on.

Now Nano Banana can leverage that info to make fun and beautiful things on your behalf.

Since you can already organize and label groups of people and pets in your library, those labels provide the context that Gemini needs to make your images feel truly yours…

With those labels in place, you can simply ask Gemini to “create a claymation image of me and my family enjoying our favorite activity” and Gemini can generate that specific image for you automatically. You can also experiment with different styles like watercolors, charcoal sketches or oil paintings. You can turn a quick idea into a custom creation, saving you the trouble of searching for, downloading and re-uploading files just to see a concept come to life.

I love this kind of simple, scrappy creativity:

Google Earth now allows importing ANY 3D model, so I used @tripoai on @fal to get Godzilla into Tokyo, and my Chrome extension to transform it into a movie scene pic.twitter.com/150iiy5UjX

— Blendi (@BlendiByl) May 13, 2026

It’s insane that we can now do things (from object removal to lighting changes) that were simply out of the question even a year ago.

Check out this little progression of edits, starting with the newly enhanced Generative Fill in the Photoshop beta, followed by a couple of steps of Remove, followed by a pass with Vividon & a few tweaks in Camera Raw (running inside PS):

Photoshop GenFill + https://t.co/FoIY1oy8vJ relighting = Magic #F35 #MoffettField pic.twitter.com/4JM7lQJnt9

— John Nack (@jnack) May 12, 2026

Nutty & I’m here for it. Per PetaPixel,

Co-founder and Chief Innovation Officer Marcus Kurn adds that the ability to deliver two or three lighting variations alongside every final image is a real differentiator: “once you start delivering two or three lighting variations with every final image, your clients will never want to go back.”

“No prompting, no friction. Just incredible results.”

As I mentioned back in January, Vividon offers new generative relighting tech that promises amazing realism & identity preservation:

Vividon places every relight on its own Photoshop layer. Adjust opacity, change blend modes, paint in or out exactly what you want, or remove it entirely. Your original always stays untouched.
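For the curious, here’s a minimal sketch of why that layer-based workflow is non-destructive. It assumes the relight sits on its own layer in Normal blend mode, where layer opacity amounts to a per-pixel linear mix of the two images. The function name and the float RGB representation are my own illustration, not Vividon’s or Adobe’s code, and real Photoshop compositing also handles per-pixel alpha, masks, and many other blend modes.

```python
def composite_normal(base, relight, opacity):
    """Blend a relight layer over a base image (Normal mode, no per-pixel alpha).

    Hypothetical helper for illustration only.
    base, relight: lists of (r, g, b) tuples with channels in [0.0, 1.0].
    opacity: layer opacity in [0.0, 1.0].
    Returns a NEW list of pixels; `base` is never modified.
    """
    if not 0.0 <= opacity <= 1.0:
        raise ValueError("opacity must be between 0 and 1")
    if len(base) != len(relight):
        raise ValueError("images must have the same number of pixels")
    # Linear interpolation per channel: base + (relight - base) * opacity
    return [
        tuple(b + (r - b) * opacity for b, r in zip(base_px, relight_px))
        for base_px, relight_px in zip(base, relight)
    ]


original = [(0.2, 0.2, 0.2), (1.0, 0.5, 0.0)]
relit = [(0.6, 0.6, 0.6), (1.0, 1.0, 1.0)]
half = composite_normal(original, relit, 0.5)  # roughly [(0.4, 0.4, 0.4), (1.0, 0.75, 0.5)]
```

Dialing opacity back to 0 returns the original pixels, and because the function builds a new list rather than writing into `base`, the original image stays untouched no matter how you tweak the layer.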

Check out a 10-second demo below, and visit their site for a more interactive preview:

And here’s a full 2-minute tour:

“People will forget what you said, people will forget what you did, but people will never forget how you made them feel.” — Maya Angelou

I’ve reflected on this maxim countless times over the last couple of years, as I’ve considered the relationships I want with AI—particularly with notional creative partners. I want a partner who cares—who (which?) actually takes the time to get to know me, asking thoughtful questions, noodling on answers, and genuinely taking my feedback to heart.

I thought of this while listening to Stewart Brand talking to Ezra Klein the other day. Check out this poetic & provocative passage:

Well, it wound up that, basically, most of the book is Chapter 2, “Vehicles.” And the land vehicle that humans have used for 6,000 years is a horse, and the horse takes a lot of maintenance.

I’ll read something here from the book, if I may. There’s this philosopher named Albert Borgmann who wrote:

You cannot remain unmoved by the gentleness and conformation of a well-bred and well-trained horse — more than a thousand pounds of big-boned, well-muscled animal, slick of coat and sweet of smell, obedient and mannerly, and yet forever a menace with its innocent power and ineradicable inclination to seek refuge in flight, and always a burden with its need to be fed, wormed and shod, with its liability to cuts and infections, to laming and heaves. But when it greets you with a nicker, nuzzles your chest and regards you with a large and liquid eye, the question of where you want to be and what you want to do has been answered.

And I end with: “I wonder if that might come again someday — a vehicle that cares back.”

Side note: “Macrófago” is 100% the best word I’ve learned all week.

Sincitium is finally here.

We are pleased to present our latest piece: a concept trailer created specifically for the @runwayml Big Pitch Contest. For this project, we wanted to explore a completely different aesthetic from our usual studio style, and this film is the result of… pic.twitter.com/FHKkZWjjJg

— Contanimation (@Delachica_) May 4, 2026

This is the first time I can recall watching a genuine narrative (not a handful of gee-whiz demo shots) made with AI & not really caring about the production details. We’re turning the inevitable corner where it’s just the quality of ideas & narrative that’ll matter—not so much how the proverbial sausage was made.

WE FOUND SOMETHING IN… [THE DEAD MALL]

Seedance 2.0 Omni-Reference

A girl gang and their scooter storms into an abandoned mall at 2 a.m. Inside, they stumble on something that has no business being there. Instead of running, they stay, and what follows spirals into total,… pic.twitter.com/fC25Q24w8H

— DAN · MXVDXN (@mxvdxn) May 1, 2026

Is it still brainrot if it’s really skillfully done, like several of these clip-bombing bits from FuruFuru? Check it out & be the judge:

I am obsessed with this Japanese man using AI video to put himself into movies

(he’s on IG at @ai_am_furufuru) pic.twitter.com/ePeVPkpEG4

— Justine Moore (@venturetwins) May 3, 2026

Tap the pencil icon on any clip to insert objects directly into videos or remove elements, without changing anything else:

You can also draw or annotate on an image. Flow understands your doodles and incorporates them into your final frame. You can doodle directly in Flow instead of turning to a separate editing app.

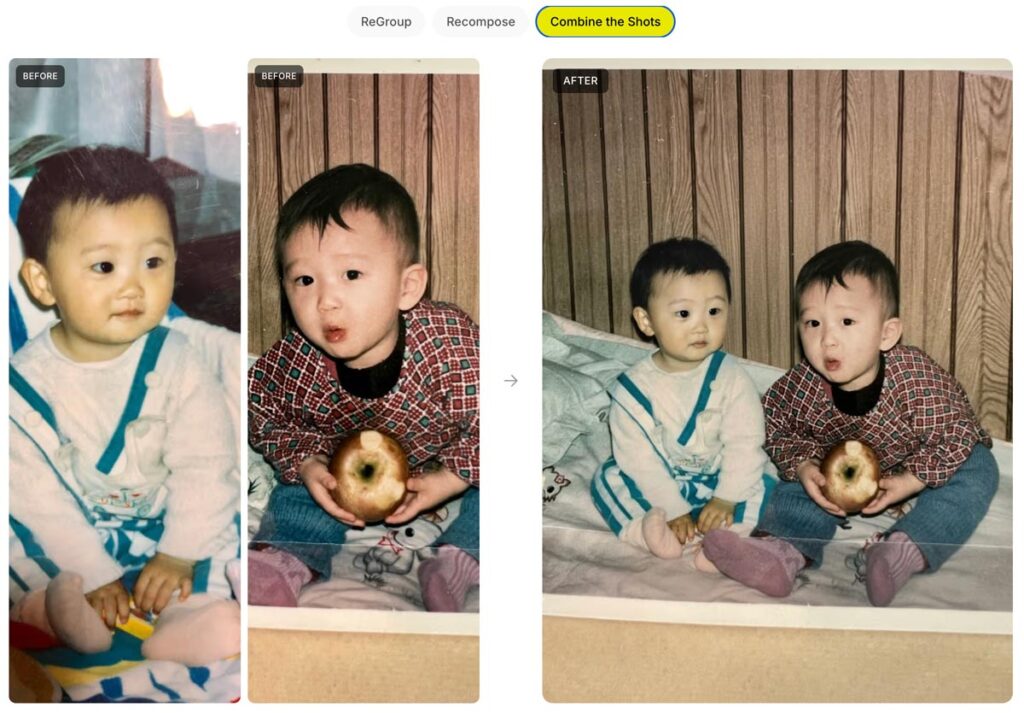

Speaking of changing angles in photos & video, Google Flow now enables changing camera angle and motion in existing clips:

A couple more examples:

Get some fresh perspective from our amazing teammates in research:

Today we are announcing a new approach to fix scene alignment after a photo was taken. Our method, now available as part of the Auto frame feature in Google Photos, uses machine learning (ML) models to understand the scene and its spatial layout and uses generative AI to imagine the photo from that new perspective. In contrast to classical photo editing, our method interprets a photo as a 3D scene (think of a real moment frozen in time) and changes the camera position automatically within that space.

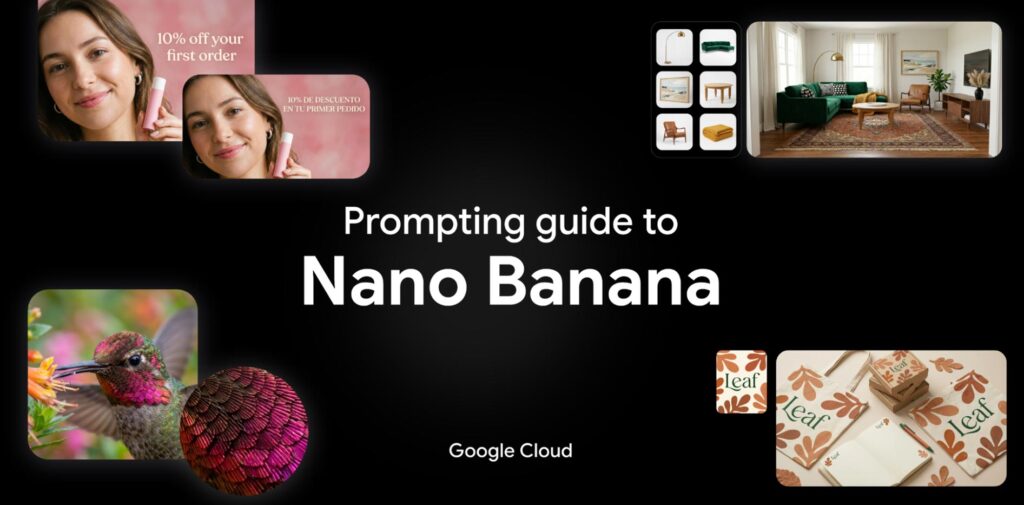

My new teammates have posted a series of detailed tips & tech specs (e.g. you can upload as many as 14 images together with a prompt). Check it out!

1. Introduction to Nano Banana

2. Technical Specs at a Glance

3. Best Practices for Prompting

4. Five Powerful Prompting Frameworks

5. The Creative Ecosystem

“Being early is the same as being wrong.” — Marc Andreessen, Vol. ~900

We put 3D into Photoshop nearly 20 years ago, and it got used by nearly 20 people total, lol. For many of the past several years, it was on the team’s “gotta throw overboard, as soon as we can find time” list—but happily that time was never found.

I am so glad to see this foundation now finding a meaningful niche, and I have high hopes for its generative future. Posing a person or thing directly is so much more intuitive than trying to precisely describe an outcome via prompt, and simple 3D manipulation + generative rendering could well deliver a game-changing best of both worlds.

The new “Rotate Object” feature has finally been added to the official release version #PR #AdobePhotoshop #AdobePartner @creativecloudjp pic.twitter.com/f93OYx2hWo

— タマケン | デザイン (@DesignSpot_Jap) April 28, 2026

Just like it says on the tin. Check it out:

Photoshop is the most powerful way to use Nano Banana 2

In photoshop you can sketch and control exactly where everything goes in your nano banana 2 generation

Here’s how I’ve been using it: #AdobeFireflyAmbassadors #Ad #AdobePartnerModels pic.twitter.com/c7YzV55JNS

— Allen T. (@Mr_AllenT) April 6, 2026

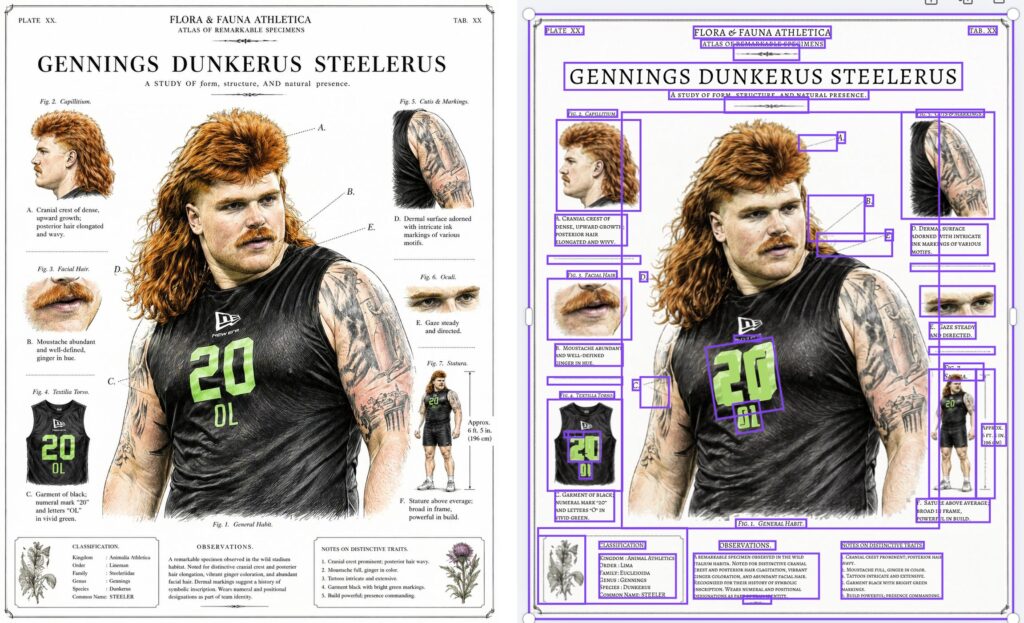

As generative imaging models like Nano Banana get increasingly adept at rendering text-heavy layouts, the ability to convert those layouts into native text/image compositions is of course hugely valuable for editing. Check out Canva’s new Magic Layers feature:

Your posters made with GPT Images 2.0 are finally 100% editable

With Canva you can separate the layers and customize each text or image. No more settling for whatever the AI gives you: now the design is 100% yours

I’ll show you how to do it pic.twitter.com/UudG6Kk4zP

— ImPaul (@impaulxyz) April 26, 2026

I couldn’t resist trying it out with a silly infographic I made using the new ChatGPT image model, and dang if it didn’t do a pretty great job:

Man, it must be nearly 20 years ago that we started envisioning drag-and-drop-simple composition and compositing in Photoshop—back when gradient-domain painting & blending was the emerging hotness. After plenty of false starts, could these simple interaction patterns finally become mainstream? Maybe! I must know more of this witchcraft:

Do you like image editing? Don’t like prompt engineering? Want to see what a giraffe-duck hybrid looks like?

If you answered yes at least once, you may like our new #SIGGRAPH2026 paper: LooseRoPE, which presents a new, prompt-free way to edit images using simple visual cues pic.twitter.com/JMzMDHJ9wE

— Etai Sella (@etai_sella) April 23, 2026

You can now check if a video was edited or created with Google AI directly in the Gemini app.

Just upload a video and ask something like, “Was this generated using Google AI?” Gemini will scan for the imperceptible SynthID watermark across both the audio and visual tracks and use its own reasoning to return a response that gives you context. For example, it might say: “SynthID detected within the audio between 10-20 secs. No SynthID detected in the visuals.”

Uploaded files can be up to 100 MB and 90 seconds long.

Check out this super cool mashup between Google Maps & my new product, Veo (video generation):

280 billion Street View images + generative AI = Maps Imagery Grounding.

At #GoogleCloudNext, we announced that brands can now generate beautiful AI visuals, all anchored in Street View.

For example, when storyboarding, filmmakers can visualize a scene – like a spaceship taking… pic.twitter.com/epwc0GvAm2

— Miriam Daniel (@miriamkdaniel) April 22, 2026

The team writes,

With Maps Imagery Grounding, a film studio can use a laptop to quickly visualize a scene at a specific place, like Washington Square Park in New York City—before scouts ever set foot on set. It’s easy to use: just type a prompt like “generate an image of a futuristic spaceship hovering in front of the Washington Square Arch” into the Gemini Enterprise Agent Platform and enable grounding with Google Maps Imagery in settings. In seconds, you can storyboard your creative vision with an accurate image—and you can even use Veo to animate the scene.

Check out this cool little UI from Flora:

Get every angle from one product shot. Camera control rotates around any image in a full 360. Use it on PDPs, campaign stills, lifestyle, whatever you shot last week.

Now live in FLORA. pic.twitter.com/AqmxlAnGCZ

— FLORA © (@floraai) April 21, 2026

I had no idea! And yet here “I” am, thanks to this new-to-me feature. In at least this first test, the visual likeness is very good, the gestures are a little off, and the voice is that of someone else (not shocking, as the creation flow asked me to read aloud only a couple of numbers):

Spending four minutes listening to Diplo’s thoughts on how art will be made going forward, and specifically on the value of quirky, messy, world-experiencing humans will be a good use of your time, I promise. The machine needs us ghosts.

if you are a creative you need to adapt or just like give up and become an uber driver until everyone has a waymo. I know it’s not cool or classy to speak like this but i’m not gonna candy coat the future – it is what it is . sorry for bad new’s my purist . there will always need… https://t.co/SXswII51wv

— diplo (@diplo) April 14, 2026

I’ve been sending this video to friends & family to explain what the heck it is I actually, y’know, do for a living. (It’s somehow related to enabling all this!)

Here’s a good summary from Gemini:

Nano Banana + Adobe tech FTW! Here’s a quick look:

And here’s a deeper dive:

Here’s a practical, down-to-earth application of AI from my old teammates Dana & co.:

Phota—about which I expressed some initial misgivings, given its ability to rewrite memories—has launched Phota Studio & their API. From what I can tell, it builds upon a Nano Banana foundation and adds personalization that relies on uploading dozens of images of each individual in order to maximize identity preservation:

With Phota, for the first time, you can generate, edit, and enhance photos while keeping your identity intact, every time.

We’re not building a generic foundation model. We build personal models about you, and about the people and pets around you. At the center are profiles, built from your personal album that learn the details of your appearance that make you recognizable as yourself: how you smile, your eye color, and how your face looks from different angles. Your personal model is private and only used by you.

Today, we introduce Phota Studio and Phota API, powered by our photography model that brings flagship image model capabilities, personalized to you.

With personalization, an image model stops being just playful and starts becoming useful for photography.

With Phota Studio, you… pic.twitter.com/UFOW32Vpvh

— Phota Labs (@PhotaLabs) March 26, 2026

Here’s a quick thread in which I tried inserting myself into a couple of images, using both Phota’s model (which depended on my uploading 30+ images of myself) and just Nano Banana straight out of the Gemini app:

Currently having fun Phota-bombing historical events in @PhotaLabs, which mixes their custom, identity-optimized model with @NanoBanana: pic.twitter.com/f3atvLkbxM

— John Nack (@jnack) April 6, 2026

I love seeing progress like this: upload a product pic, convert it to 3D, and photograph it on a virtual set:

Your next 3D photo shoot will be done with AI

3D Scenes generates full environments from any image

→ Place your objects in the scene

→ Move the camera like a real shoot

→ Consistent lightning and detail across every angle

Available now on Freepik pic.twitter.com/blLN6fN1YW

— Freepik (@freepik) March 26, 2026

Go from 2D to a 3D

Upload your product photo → AI builds the scene around it → navigate freely in a 3D space

Rotate, zoom and explore every angle pic.twitter.com/waJf70Bdmn

— Freepik (@freepik) March 26, 2026

“Now with more distractions” isn’t usually the kind of thing one would tout—but as you’ll see, it’s just the kind of smarts people want for clean-up work:

Photoshop’s Remove Tool is getting a HUGE upgrade with more distractions.

A LOT more! pic.twitter.com/EYHp3Dilmm

— Howard Pinsky (@Pinsky) March 27, 2026

Here’s a fun, ultra-simple way to turn an image (or just a prompt) into a short, multi-shot narrative:

Introducing the Multi-Shot App. An easy way to go from a simple prompt to a thoughtfully crafted scene. All with dialogue, sound effects, intentional cuts, pacing and cinematic framing. Start from an image or go purely Text to Video for total creative exploration. Available now… pic.twitter.com/ek5uuuVf06

— Runway (@runwayml) March 26, 2026

Just for fun I fed it this image…

…and this prompt (based on an all-too-true story):

A family of Lego people and their dog gaze around Yosemite’s most iconic vista, then reminisce about that time they got stuck there in the snow in their VW van, expressing hope that they don’t get stuck again!

Check out the results:

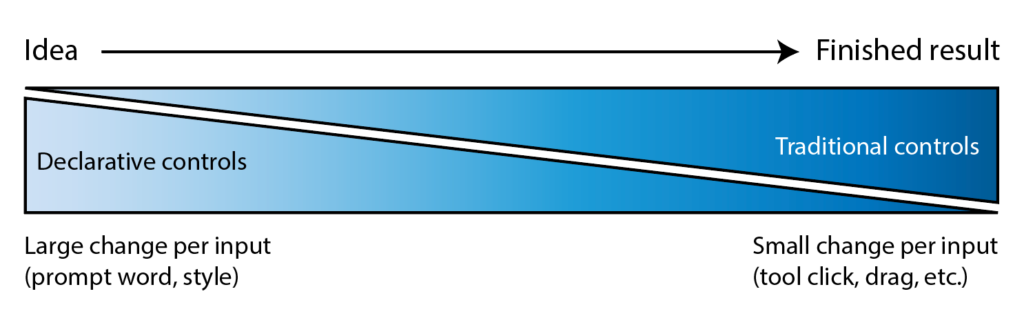

I’ve long quoted James Ratliff, the super sharp designer behind Adobe’s Project Graph (who’s recently decamped to Figma), in nicely phrasing how the process of generating & refining ideas generally starts broad/declarative (searching, prompting) and moves towards fine-grained methods (selecting, moving, etc.):

I see an increasing number of tool & model creators mixing modalities—even in the Gemini Super Bowl ad featuring a mom & daughter drawing a simple circle to show where they’d like to add a dog bed.

I’m eager to check out Lovart’s take on the possibilities, especially for animation:

⚡️ New on Lovart: Move Object

→ Select any object with rectangular or lasso tool

→ Move it wherever you want

→ Prompt optional modifications

→ One clean, consistent image

No masks. No layers. No re-roll. pic.twitter.com/Sw800icnsu

— LovartAI (@lovart_ai) March 25, 2026

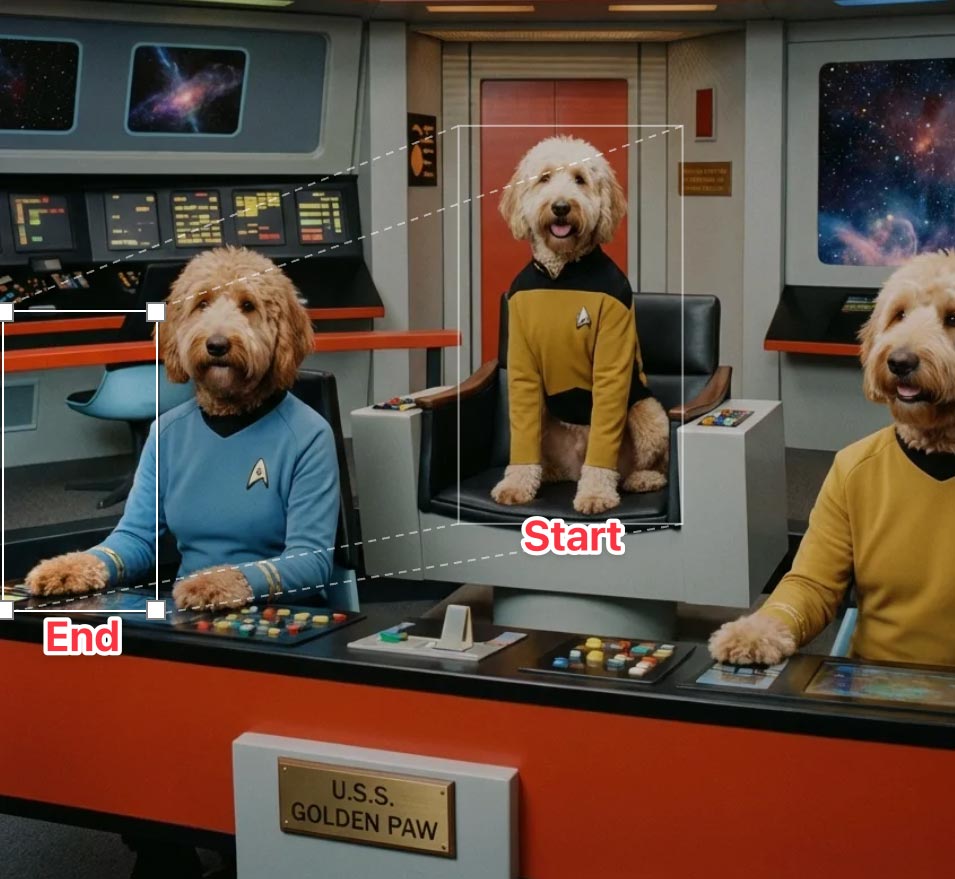

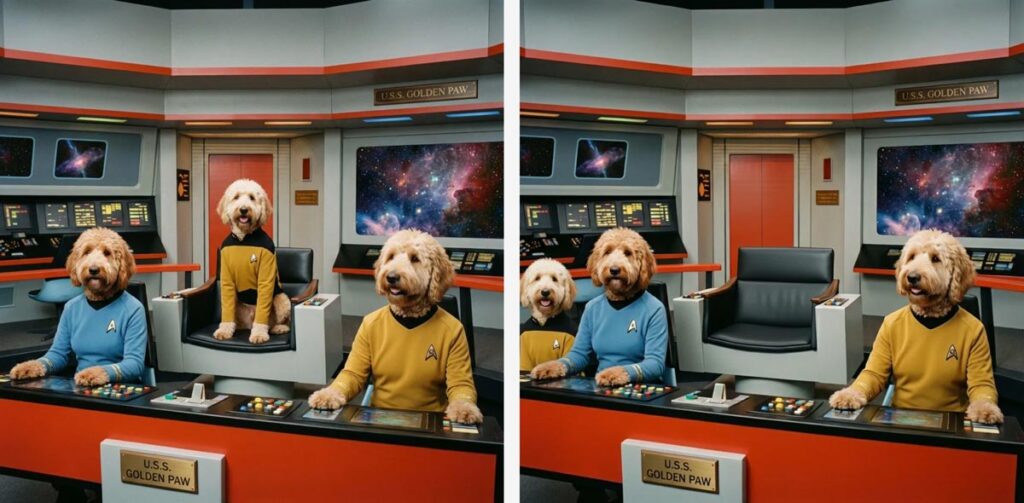

Update: Here’s a look at the UI, in which you can move & scale the selection rectangle, as well as the before & after images:

“3D scenes, websites, games, apps,” promises Spline. “Describe anything and Omma builds it for you in seconds.”

Omma combines code generation (LLMs), 3D AI mesh generation, and Image generation all in one place for you to build and ship. Deploy to production, assign custom domains, and more.

Ten years ago (!), the embryonic social app Peach suddenly blew up on the scene—only to molder shortly thereafter. Adam Lisagor tartly predicted that outcome right after Peach debuted:

I just joined Peach. Did you see that thing on Peach? Only teens use Peach these days. Nobody uses Peach anymore. Oh my god, remember Peach?

— Adam Lisagor (@adamlisagor) January 9, 2016

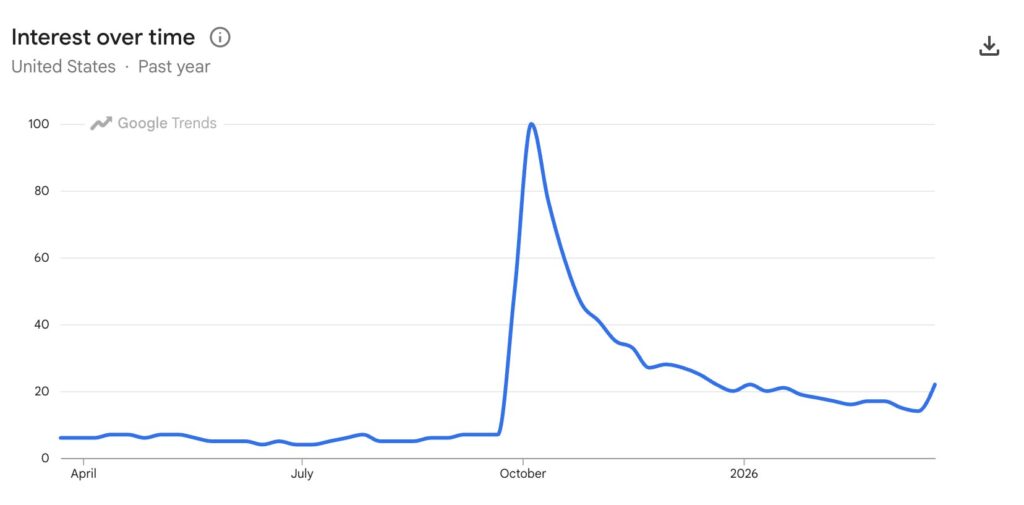

I’m reminded of this upon hearing that OpenAI has bailed out on Sora, which they launched just a few months ago. In a way I’m not surprised—check out how interest in the tech spiked & then rapidly cratered—except that just a couple of months ago Disney signed a billion-dollar deal to use it. ¯\_(ツ)_/¯

When can we get this (or equivalent) into Photoshop??

Okay it seems like @LumaLabsAI new uni-1 model is actually on a category of its own

You can give it one complex composition and its able to extract each layer as a new image generation

Are there any other models that can do this? https://t.co/COXdUvX4id pic.twitter.com/3w5quKoiFX

— Lucas Crespo (@lucas__crespo) March 23, 2026

On a conceptually (though not necessarily technically) related note, the LICA dataset may help model makers train layered generation:

Much of today’s AI-generated graphic designs look like slop because there is a lack of high-quality, open datasets. This space is a cluster of walled gardens (@figma , @canva , @Adobe ). We’ve built one of the largest graphic design dataset, 1.5 million compositions spanning… pic.twitter.com/YauAe7ugiD

— Priyaa (@pritopian) March 20, 2026

Speaking of spinning right ’round, check this out:

We just released Rotate Object in Photoshop (beta)

You can now rotate 2D images!

Then use Harmonize to add light and shadows, to blend it perfectly with the rest of the scene.

It’s like Turntable in Illustrator, but instead of vectors, it’s pixels in Photoshop! pic.twitter.com/85bvBIjCB9

— Kris Kashtanova (@icreatelife) March 12, 2026

Check out another view, from Paul Trani:

Game changer for compositors! Rotate an image as if it’s a 3D object in the #Photoshop beta! Interested?? pic.twitter.com/RUgsZZup7u

— Paul Trani (@paultrani) March 12, 2026

Five years ago, I spent an afternoon with a buddy watching Disco Diffusion resolve a weird, blurry, but ultimately delightful scene over the course of 15 minutes. Now Runway & NVIDIA are previewing generation that’s a mere ~90,000x faster than that. Ludicrous speed, go!!

A breakthrough in real-time video generation.

As a research preview developed with @NVIDIA and shared at @NVIDIAGTC this week, we trained a new real-time video model running on Vera Rubin. HD videos generate instantly, with time-to-first-frame under 100ms. Unlocking an entirely… pic.twitter.com/juafjvk0wm

— Runway (@runwayml) March 18, 2026

An AI paradox: as models get vastly more complex, interfaces can get vastly simpler. We can make computers conform to our reality—not the other way around.

Steve Jobs described exactly this evolution all the way back in 1981:

Structuring your prompt well turns out to be key in avoiding garbled text. As the presenter says, “It’s not about writing more. It’s about writing in the right order.” Check out this brief overview.

In this tutorial, you’ll see how to use Nano Banana Pro and Kling 3.0 Omni together to solve one of the most common pain points in AI product video: text that blurs, warps, or drifts mid-motion. We’ll walk through a practical workflow for maintaining legibility and visual consistency in product shots, so your labels, logos, and copy stay clean from the first frame to the last.

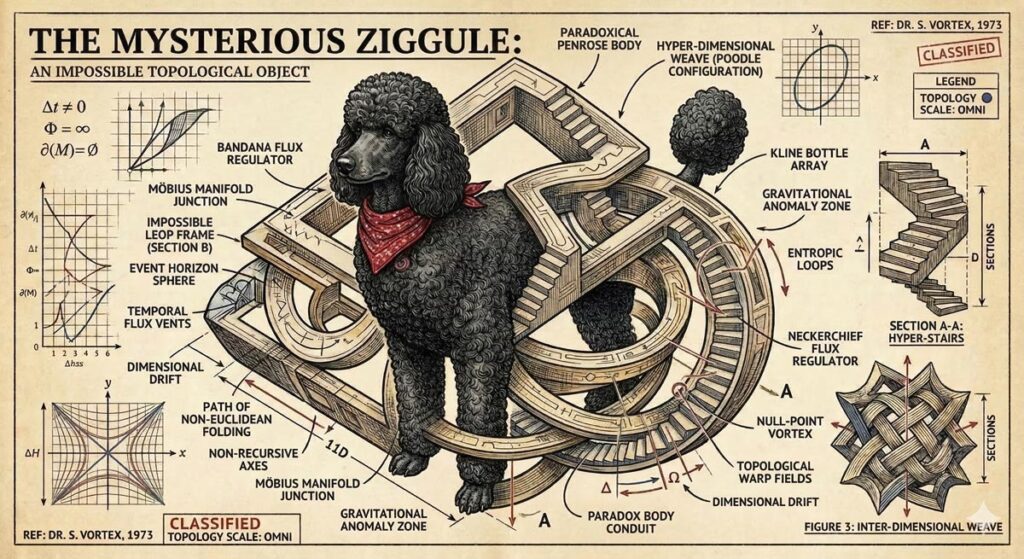

Long dog walks are for nothing if not visualizing whatever silliness pops into my head—which today happened to be our puppy Ziggy becoming an impossible object called a “Ziggule.”

I shared this with my cousin Alicia, who does a tremendous amount of work sheltering & rescuing dogs in Austin, and she requested a portrait of their current foster pooch (Tesseract). I was of course all too happy to oblige:

As it happens, folks at Google have had the same idea, and they’ve been putting Nano Banana to work helping zhuzh up pics of shelter pets in hopes of helping them find their forever homes. Let’s hear it for using AI & old-fashioned human creativity for good!

Photos play a big role in pet adoption.

We’ve teamed up with shelters across the country to give rescue pets glamorous headshots that show off their personalities, made with Nano Banana Pro.

Take a look below and contact our partner shelters for adoption inquiries pic.twitter.com/Lh565trjgR

— Google Gemini (@GeminiApp) January 21, 2026

As you’ve likely heard me say, I’ve gotten psyched up too many times about AI video-editing tech that fell short of its ambitions—but I’m hoping that this work from Adobe & Harvard collaborators can deliver what it describes:

We present Vidmento, an interactive video authoring tool that expands initial materials and ideas into compelling video stories through blending captured and generative media. To preserve narrative continuity and creative intent, Vidmento generates contextual clips that align with the user’s existing footage and story.

Per the site, Vidmento should enable:

Oh boy. 🙂 (But, like, holy crap—compare this to the hot garbage we were getting less than a year ago!)

Here’s the video containing those images. Overall it’s kinda good—kinda! pic.twitter.com/KOFBevbebU

— John Nack (@jnack) March 6, 2026

Among the misbegotten “Oh, everyone will love this—but rarely will anyone actually use it” AR demos of 2017 (right alongside “See whether this toaster fits on my counter!”), imagining restaurants plopping a 3D model onto your plate was always a banger. Leaving aside whether anyone would actually want or value that experience, the cost of realistically modeling dishes was prohibitive.

This new tech at least promises to take the grunt work out of model creation, turning a single photo into an AR-ready 3D asset (give or take a tine or two ;-)):

AR GenAI by AR Code is transforming the food industry. Creating an AR experience for a dish can now start with a single photo.

As shown in the video, a single dessert photo is converted into an AR-ready 3D model with realistic textures and depth. AR Code SaaS then instantly… pic.twitter.com/s1H5do1UUf

— Maxime Maisonneuve (@maximemaisonneu) January 25, 2026

I try not to curse on this blog, doing so maybe a dozen times in 20+ (!!) years of posting. But circa 2013-2017, when I saw what felt like uncritical praise for Adobe’s voice-driven editing prototypes, I called bullshit.

The high-level concept was fine, but the tech at the time struck me as the worst of both worlds: the imprecision of language (e.g. how does a normal person know the term “saturation,” and how does an expert describe exactly how much they want?) combined with the fragility of traditional selection & adjustment algorithms.

Now, however, generative tech can indeed interpret our language & effect changes—and in the case of Krea’s new realtime mode, in a highly responsive way:

introducing Voice Mode.

speak as you draw and get changes in real-time.

available now in Krea iPad. pic.twitter.com/c6mHHjupmW

— KREA AI (@krea_ai) March 2, 2026

Whether or not voice per se becomes a popular modality here, closing the gap between idea & visual is just so seductive. To emphasize a previously made point:

We simply have not started rethinking interactions from the grounds up.

So many possibilities wide open when you think of human – AI in micro feedback loops vs automation alone or classic back and forth. https://t.co/iVKb02SbdU

— tuhin (@tuhin) February 18, 2026

I couldn’t have contrived a better example of the power & pitfalls of generative imaging if I tried.

Here’s a pretty crummy cell phone picture I took yesterday from a moving train & then enhanced with a single prompt using Gemini. The results are incredible—if you don’t really care about the exact capacity of your jumbo jet! 🙂

The current state of AI-driven editing drives home the wisdom of that old Russian saying, “Trust… but verify.”

This also highlights the subtle treachery of AI photography: look how it shortened the 747! pic.twitter.com/Yga5oo1D0B

— John Nack (@jnack) February 27, 2026

See also my previously shared example, in which Nano Banana quietly upgraded this propeller-driven plane into a jet:

Testing fence removal on my son’s photo using @NanoBanana, @ChatGPTapp, and @bfl_ml.

They’re all impressive, but Nano tried to put jet engines on this prop plane, so I’m giving this round to ChatGPT. pic.twitter.com/DOvZQLT5H5

— John Nack (@jnack) December 23, 2025

When it rains, it pours: No sooner did I post about text->vector than I saw two new entrants in that space. The new Quiver AI is claimed to have “solved vector design with AI”:

Introducing @QuiverAI, a new AI lab and product company focused on frontier vector design.

We’ve raised an $8.3M seed round led by @a16z, with support from amazing angels and investors.

Our first model, Arrow-1.0, generates SVGs from images and text. It’s available now in… pic.twitter.com/mLoeM2UpGf

— Joan Rodriguez (@joanrod_ai) February 25, 2026

Here’s my first quick test, in which Quiver & Illustrator utterly smoke direct chat->vector output in Gemini & ChatGPT:

Testing text->vector in the new @QuiverAI vs. Adobe Illustrator and (yikes!) Gemini and ChatGPT. (Prompt: “A three-quarter view of a silver 1990 Mazda Miata.”) pic.twitter.com/MjTuFYLGQ3

— John Nack (@jnack) February 26, 2026

Meanwhile, check out what Recraft produced:

Impressive results from @recraftai! https://t.co/VbukNz0rtn pic.twitter.com/vaIN4ySQ4H

— John Nack (@jnack) February 26, 2026

Elsewhere, Hero Studio promises great image->SVG conversion. I’ve applied for access & am eager to take it for a spin:

You can now bring your images to life, just upload any image and it turns it into a clean and precise SVG. we’re using a custom model specifically trained for SVG recognition and generation. the results are insane pic.twitter.com/s6e4tJ4IWm

— Junior García (@jrgarciadev) February 25, 2026

When we launched Firefly three years ago (!), we talked up prompt-based vector creation. When the feature later arrived in Illustrator, it was really text-to-image-to-tracing. That could be fine, actually, provided that the conversion process did some smart things around segmenting the image, moving objects onto their own layers, filling holes, and then harmoniously vectorizing the results. I’m not sure whether Adobe actually got around to shipping that support.

In any case, Recraft now promises vector creation directly from prompts:

V4 Vector is built for real design workflows.

Clean path structure.

SVG export.

Print-ready (300 DPI, CMYK).

Generate → export → refine pic.twitter.com/XenDDSTjmd

— Recraft (@recraftai) February 23, 2026

Meanwhile Gemini promises SVG creation right out of the box. My previous attempts to use it produced results that were, um, impressionistic…

Nano Banana->SVG results can be… unique. 🙂 https://t.co/FuEgYZiL5T pic.twitter.com/9BwSqmsLmT

— John Nack (@jnack) November 20, 2025

…and based on what they’re showing vis-à-vis recent updates, I haven’t been in a hurry to try again:

“Generate an SVG of a pelican riding a car in France with a cat sitting beside it. Background has Eiffel tower.” pic.twitter.com/RjCnte4cky

— Oriol Vinyals (@OriolVinyalsML) February 19, 2026

I’ve really enjoyed collaborating with Black Forest Labs, the brain-geniuses behind Flux (and before that, Stable Diffusion). They’re looking for a creative technologist to join their team. Here’s a bit of the job listing in case the ideal candidate might be you or someone you know:

BFL’s models need someone who knows them inside out – not just what they can do today, but what nobody’s tried yet. This role sits at the intersection of creative excellence, deep model knowledge, and go-to-market impact. You’ll create the work that makes people realize what’s possible with generative media – original pieces, experiments, and creative assets that set the standard for what FLUX can do and show it to the world

— Create original creative work that pushes FLUX to its limits – experiments, visual explorations, and pieces that show what’s possible before anyone else figures it out

— Collaborate with the research and product teams from the start of training/product development to understand the core strengths of each new model/product and create assets that amplify and showcase these. You will also provide feedback to those teams throughout the development process on what needs to improve.