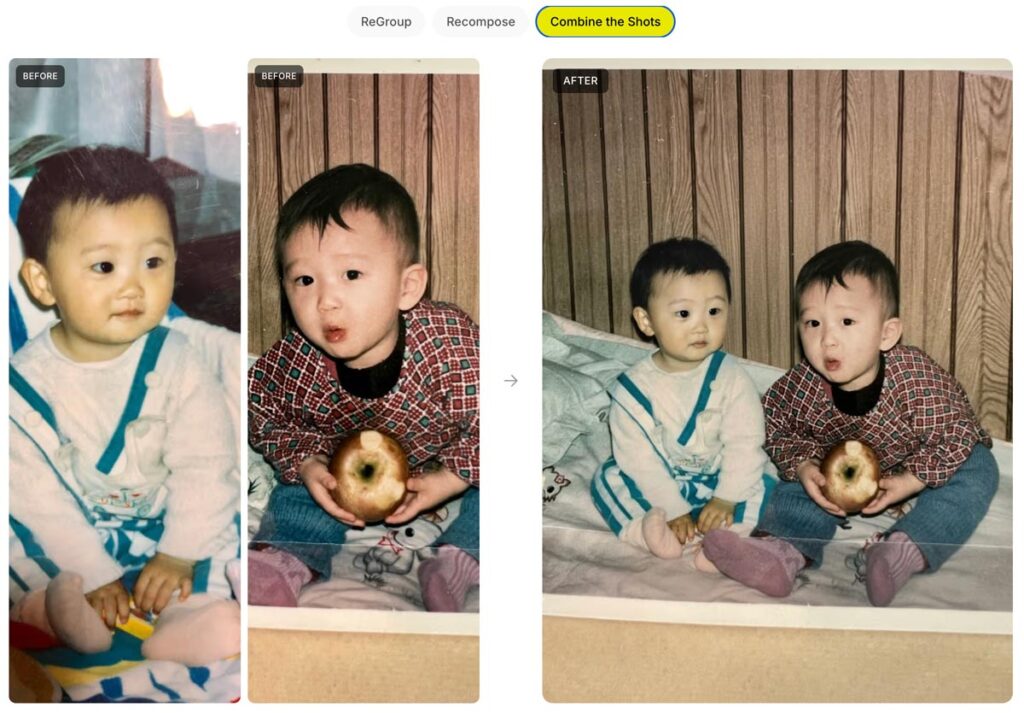

It’s insane how much we can do now, from object removal to lighting changes, that was simply out of the question even a year ago.

Check out this little progression of edits: it starts with the newly enhanced Generative Fill in the Photoshop beta, continues with a couple of passes of Remove, and finishes with a pass through Vividon & a few tweaks in Camera Raw (running inside PS):

Photoshop GenFill + https://t.co/FoIY1oy8vJ relighting = Magic #F35 #MoffettField pic.twitter.com/4JM7lQJnt9

— John Nack (@jnack) May 12, 2026

Nutty & I’m here for it. Per PetaPixel,

Co-founder and Chief Innovation Officer Marcus Kurn sees lighting variations as a real differentiator: “once you start delivering two or three lighting variations with every final image, your clients will never want to go back.”