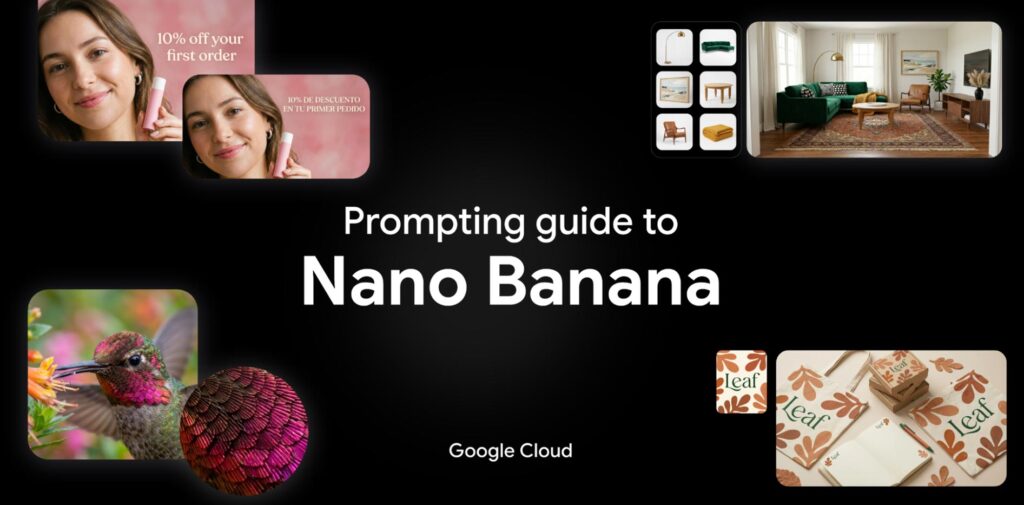

Hey gang—I am beyond delighted to say that I’m returning to Google, taking a Cloud AI PM role focusing on generative media!

As Paul Simon told us, “These are the days of miracle and wonder,” and I wonder at my amazingly good fortune in getting to help shape these miracles.

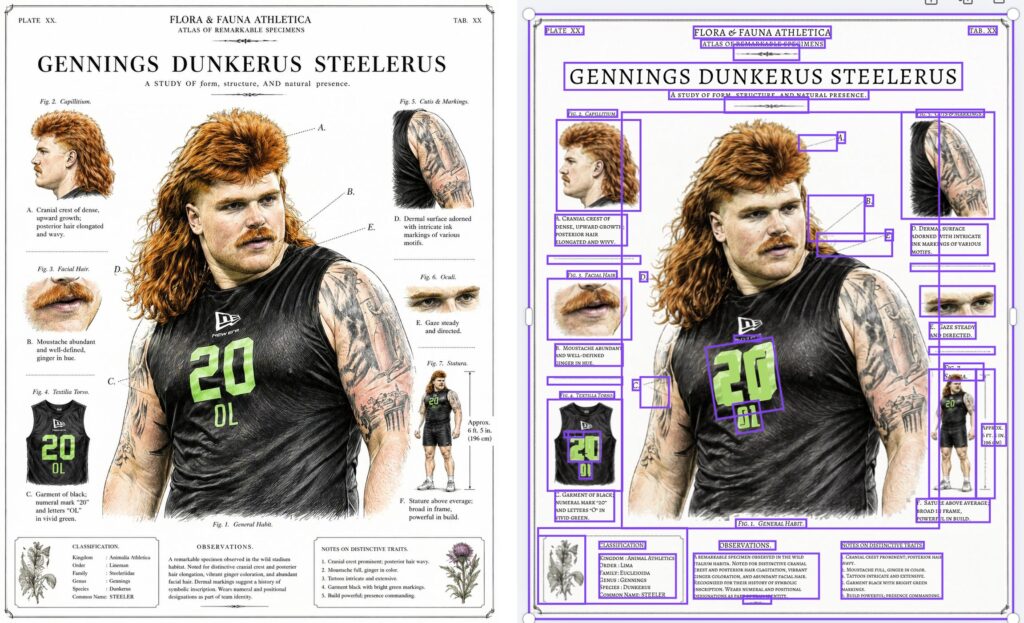

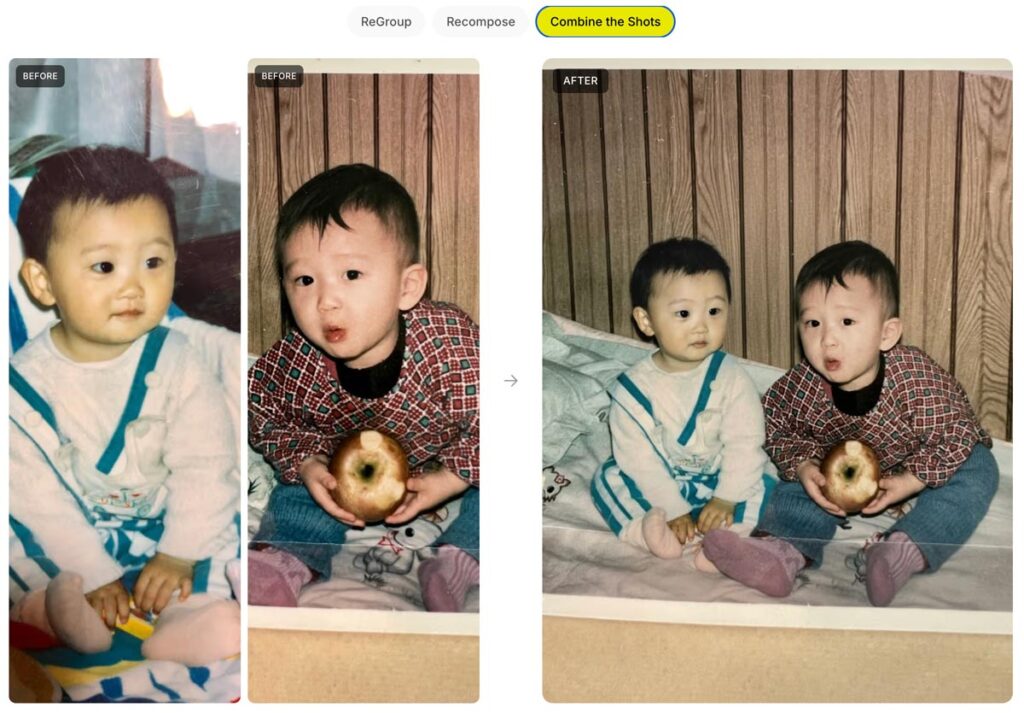

Ever since 2000, I’ve focused my PM career on “unblocking the light,” helping people make the world more beautiful and fun. From Photoshop to Google Photos to M365, I’ve loved learning what truly matters to creators. Nothing beats zeroing in on real needs, then marshaling some big giant brains to deliver everything from big breakthroughs to crafty little mint-on-the-pillow delights.

Returning from last fall’s Adobe MAX, I summarized attendees’ vibe as “Overwhelmed, But Optimistic.” Now the pressure—and privilege—is to turn that optimism into action.

There’s so much I don’t yet know about this role—but what I know for sure is that I can’t do it alone.

I know I need you.

As we all navigate this bewitching, bewildering time, let’s stick together. Please keep me honest, grounded in knowing just what you need—and what you don’t. That way I can advocate accordingly, helping Google focus on exactly what’ll benefit you most.

Questions & ideas for collaboration are always most welcome: [last name @ employer dot com]. And in the meantime I’ll keep sharing my most interesting finds on the ol’ blog—especially in the burgeoning AI/ML category.

And with that, friends, here we go!