Time is a flat circle…

Daring Fireball’s Mac 40th anniversary post contained a couple of quotes that made me think about the current state of interaction with AI tools, particularly around imaging. First, there’s this line from Steven Levy’s review of the original Mac:

> [W]hat you might expect to see is some sort of opaque code, called a “prompt,” consisting of phosphorescent green or white letters on a murky background.

Think about how revolutionarily different & better (DOS-head haters’ gripes notwithstanding) this was.

> What you see with Macintosh is the Finder. On a pleasant, light background, little pictures called “icons” appear, representing choices available to you.

And then there’s this kicker:

> “When you show Mac to an absolute novice,” says Chris Espinosa, the twenty-two-year-old head of publications for the Mac team, “he assumes that’s the way all computers work. That’s our highest achievement. We’ve made almost every computer that’s ever been made look completely absurd.”

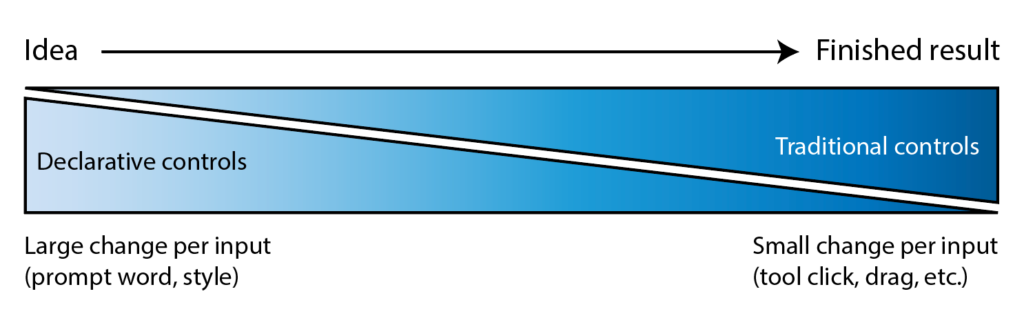

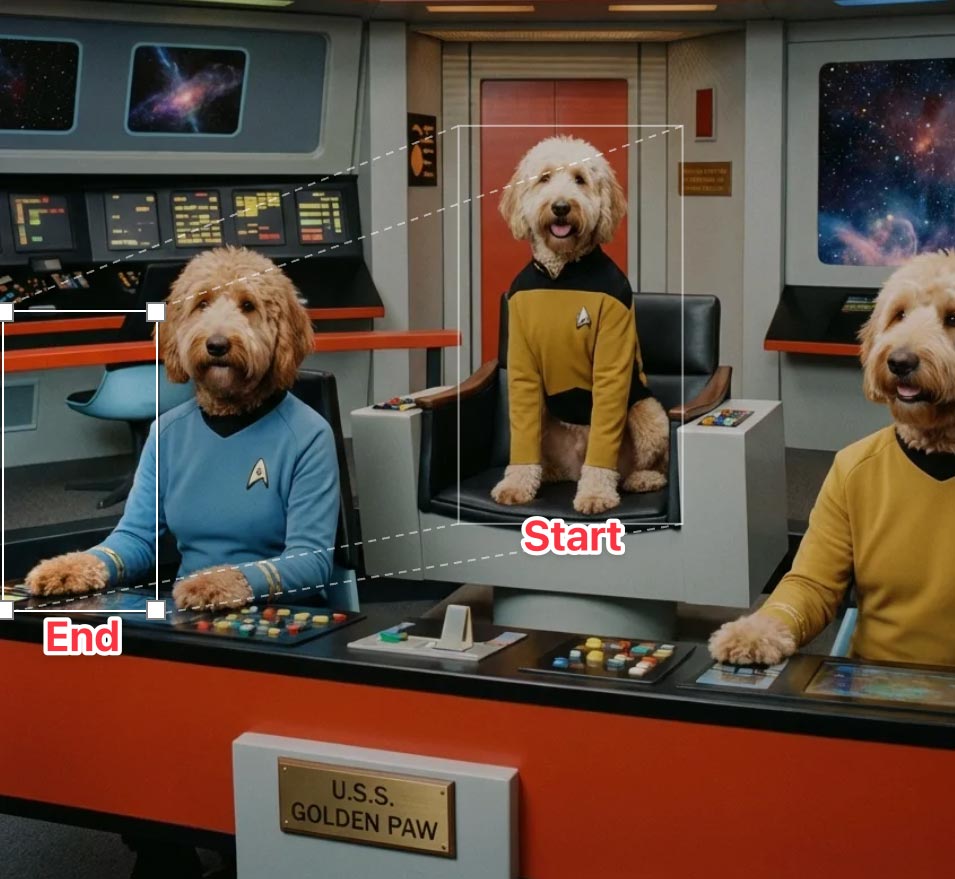

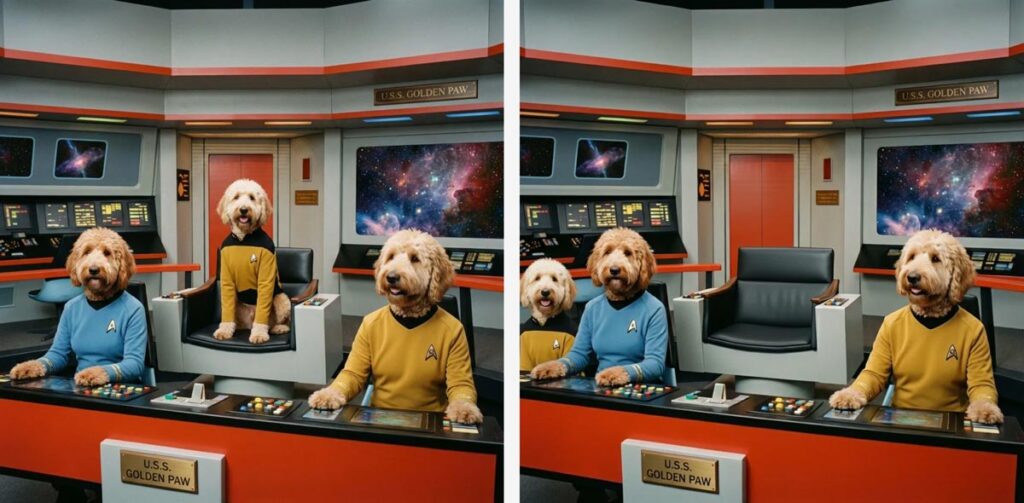

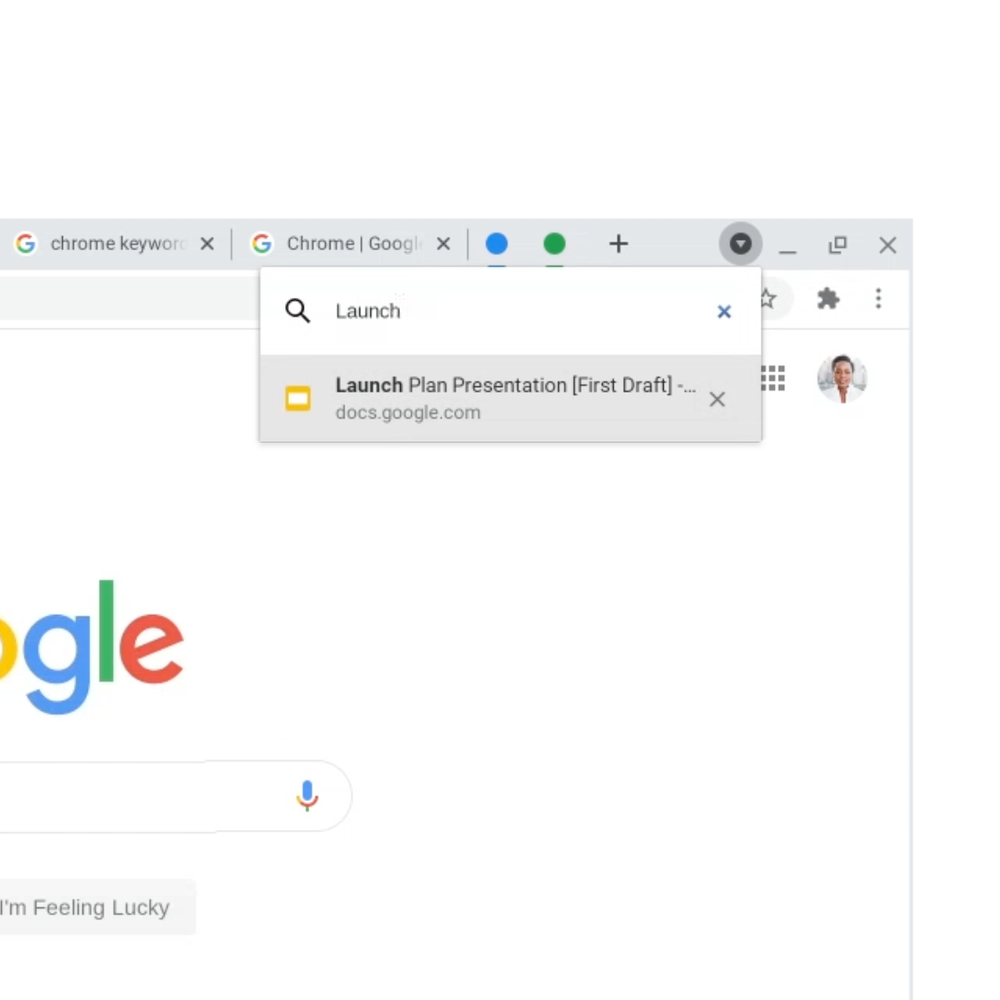

I don’t know quite what will make today’s prompt-heavy approach to generation feel equivalently quaint, but think how far we’ve come in the less than two years since DALL·E’s public debut: from swapping long, arcane codes to having more conversational, iterative creation flows (especially via ChatGPT) and creating through direct, realtime UIs like those offered by Krea & Leonardo. Throw in a dash of spatial computing, perhaps via “glasses that look like glasses,” and who knows where we’ll be!

But it sure as heck won’t mainly be about knowing “some sort of opaque code, called a ‘prompt.’”