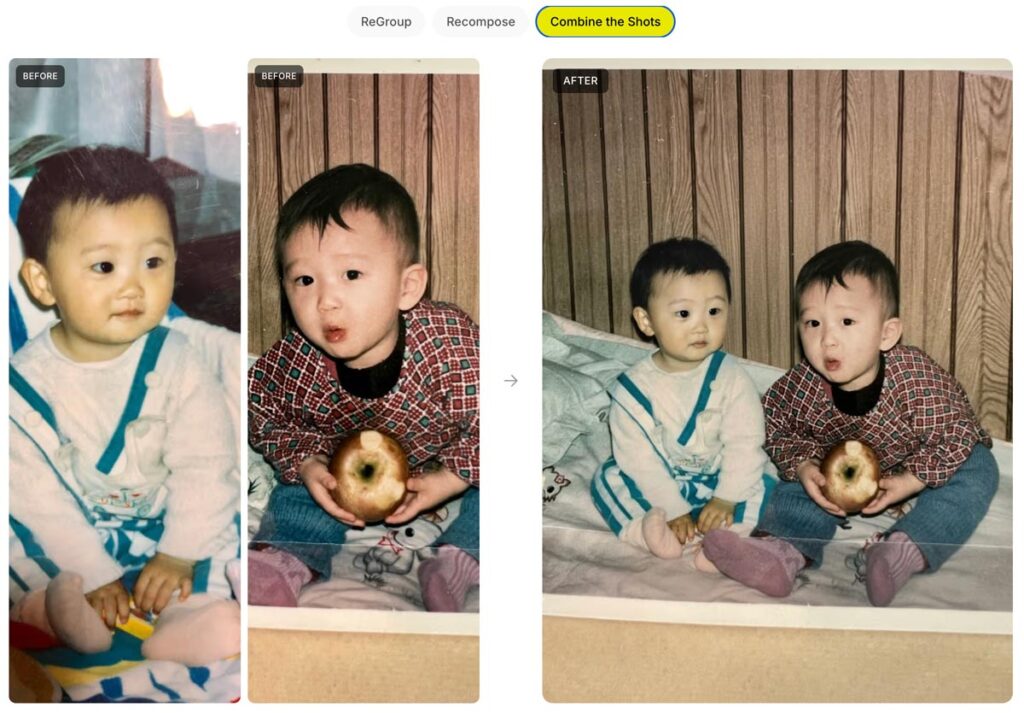

Nano Banana + Adobe tech FTW! Here’s a quick look:

And here’s a deeper dive:

Call that logo Shai-Hulud, ’cause it’s one enormous worm.

NASA reintroduced its iconic 1975 ‘worm’ logo back in 2020, cool to see it in full glory on the side of an Artemis II rocket booster. Each letter is 6 ft 10” tall and altogether 25 ft long. The Exploration Ground Systems Team use a laser projector to tape off, then paint by hand. pic.twitter.com/JMIQUcApWw

— David McGillivray (@dmcgco) April 7, 2026

Here’s a practical, down-to-earth application of AI from my old teammates Dana & co.:

Hey gang—I am beyond delighted to say that I’m returning to Google, taking a Cloud AI PM role focusing on generative media!

As Paul Simon told us, “These are the days of miracles and wonder”—and I wonder at my amazingly good fortune getting to help shape these miracles.

Ever since 2000, I’ve focused my PM career on “unblocking the light,” helping people make the world more beautiful and fun. From Photoshop to Google Photos to M365, I’ve loved learning what truly matters to creators. Nothing beats zeroing in on real needs, then marshaling some big giant brains to deliver everything from big breakthroughs to crafty little mint-on-the-pillow delights.

Returning from last fall’s Adobe MAX, I summarized attendees’ vibe as “Overwhelmed, But Optimistic.” Now the pressure—and privilege—is to turn that optimism into action.

There’s so much I don’t yet know about this role—but what I know for sure is that I can’t do it alone.

I know I need you.

As we all navigate this bewitching, bewildering time, let’s stick together. Please keep me honest, grounded in knowing just what you need—and what you don’t. That way I can advocate accordingly, helping Google focus on exactly what’ll benefit you most.

Questions & ideas for collaboration are always most welcome: [last name @ employer dot com]. And in the meantime I’ll keep sharing my most interesting finds on the ol’ blog—especially in the burgeoning AI/ML category.

And with that, friends, here we go!

I love behind-the-scenes little insights like these. Click or tap as needed to see the full post:

NASA trained astronauts to take photos without being able to see what they were shooting. They bolted a camera to each astronaut’s chest, removed the viewfinder, and handed them pressurized gloves so thick they could barely feel the shutter button. Before each Apollo mission,… https://t.co/QS1bOKtNvo pic.twitter.com/tJxgRVlwJ3

— Anish Moonka (@anishmoonka) April 6, 2026

Phota—about which I expressed some initial misgivings, given its ability to rewrite memories—has launched Phota Studio & their API. From what I can tell, it builds upon a Nano Banana foundation and adds personalization that relies on uploading dozens of images of each individual in order to maximize identity preservation:

With Phota, for the first time, you can generate, edit, and enhance photos while keeping your identity intact, every time.

We’re not building a generic foundation model. We build personal models about you, and about the people and pets around you. At the center are profiles, built from your personal album that learn the details of your appearance that make you recognizable as yourself: how you smile, your eye color, and how your face looks from different angles. Your personal model is private and only used by you.

Today, we introduce Phota Studio and Phota API, powered by our photography model that brings flagship image model capabilities, personalized to you.

With personalization, an image model stops being just playful and starts becoming useful for photography.

With Phota Studio, you… pic.twitter.com/UFOW32Vpvh

— Phota Labs (@PhotaLabs) March 26, 2026

Here’s a quick thread in which I tried inserting myself into a couple of images, using both Phota’s model (which depended on my uploading 30+ images of myself) and just Nano Banana straight out of the Gemini app:

Currently having fun Phota-bombing historical events in @PhotaLabs, which mixes their custom, identity-optimized model with @NanoBanana: pic.twitter.com/f3atvLkbxM

— John Nack (@jnack) April 6, 2026

Heh—it’s fun to see the fruits of my former team’s efforts going to fun use: Google’s open-source MediaPipe framework enables body tracking, among many other things:

i made tetris but the board and pieces are attached to your body and it’s quite tiring to play pic.twitter.com/yEoA49igpX

— AA (@measure_plan) March 31, 2026

I love love love the attention to detail that Phil Lord and Christopher Miller brought to the film. Check out the lengths they & their crew went to on everything from devising rotating lights for the inter-ship tunnel (conveying constant rotation) to nailing film grain. And I love the exuberance & generosity of creators in sharing so many insights into design & process.

I love seeing progress like this: upload a product pic, convert it to 3D, and photograph it on a virtual set:

Your next 3D photo shoot will be done with AI

3D Scenes generates full environments from any image

→ Place your objects in the scene

→ Move the camera like a real shoot

→ Consistent lightning and detail across every angle

Available now on Freepik pic.twitter.com/blLN6fN1YW

— Freepik (@freepik) March 26, 2026

Go from 2D to a 3D

Upload your product photo → AI builds the scene around it → navigate freely in a 3D space

Rotate, zoom and explore every angle pic.twitter.com/waJf70Bdmn

— Freepik (@freepik) March 26, 2026

“Now with more distractions” isn’t usually the kind of thing one would tout—but as you’ll see, it’s just the kind of smarts people want for clean-up work:

Photoshop’s Remove Tool is getting a HUGE upgrade with more distractions.

A LOT more! pic.twitter.com/EYHp3Dilmm

— Howard Pinsky (@Pinsky) March 27, 2026

I dug this quick tour from Robert Hranitzky, giving a peek behind the scenes on a recent project:

Here’s a fun, ultra-simple way to turn an image (or just a prompt) into a short, multi-shot narrative:

Introducing the Multi-Shot App. An easy way to go from a simple prompt to a thoughtfully crafted scene. All with dialogue, sound effects, intentional cuts, pacing and cinematic framing. Start from an image or go purely Text to Video for total creative exploration. Available now… pic.twitter.com/ek5uuuVf06

— Runway (@runwayml) March 26, 2026

Just for fun I fed it this image…

…and this prompt (based on an all-too-true story):

A family of Lego people and their dog gaze around Yosemite’s most iconic vista, then reminisce about that time they got stuck there in the snow in their VW van, expressing hope that they don’t get stuck again!

Check out the results:

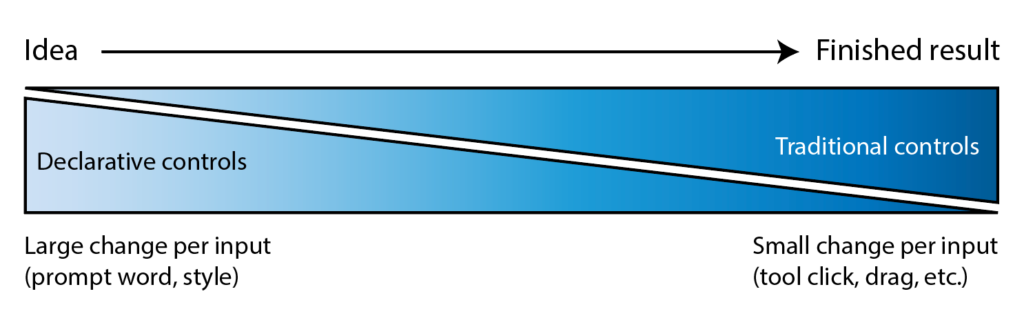

I’ve long quoted James Ratliff, the super sharp designer behind Adobe’s Project Graph (who’s recently decamped to Figma), on how the process of generating & refining ideas generally starts broad/declarative (searching, prompting) and moves towards fine-grained methods (selecting, moving, etc.):

I see an increasing number of tool & model creators mixing modalities—even in the Gemini Super Bowl ad featuring a mom & daughter drawing a simple circle to show where they’d like to add a dog bed.

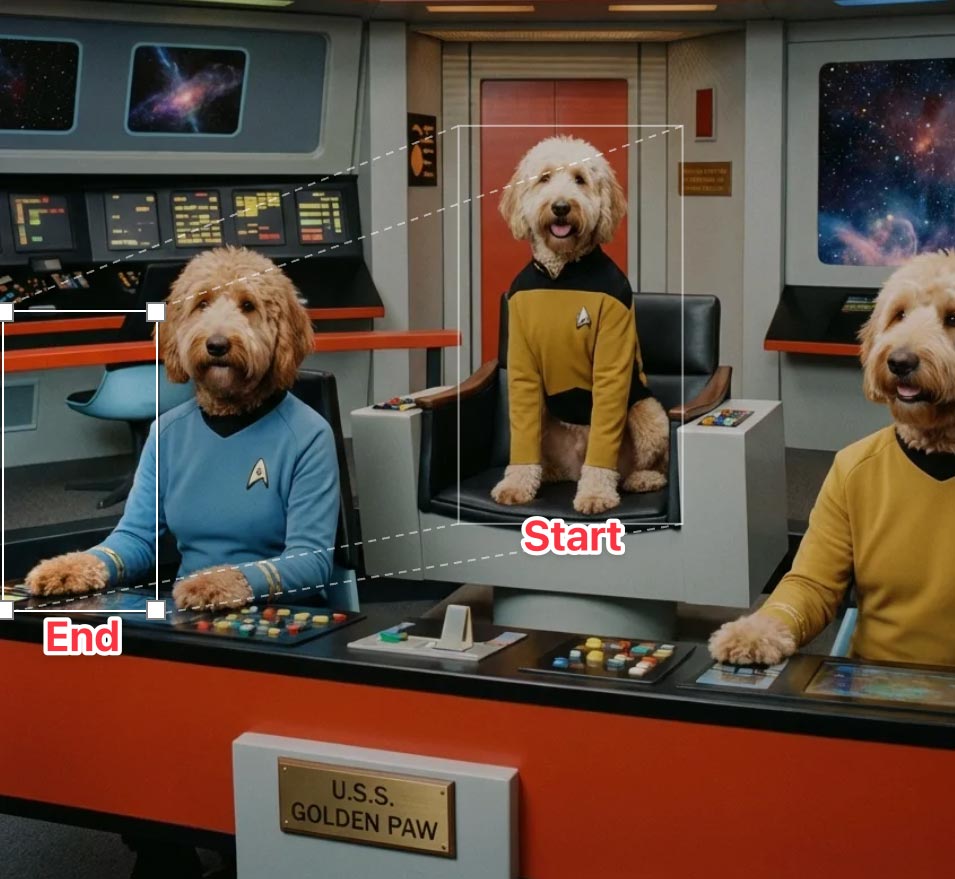

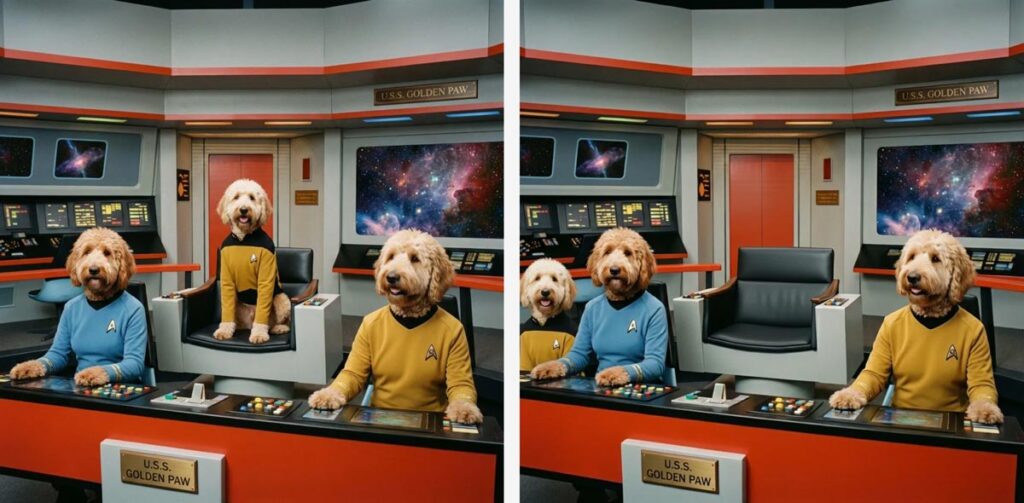

I’m eager to check out Lovart’s take on the possibilities, especially for animation:

⚡️ New on Lovart: Move Object

→ Select any object with rectangular or lasso tool

→ Move it wherever you want

→ Prompt optional modifications

→ One clean, consistent image

No masks. No layers. No re-roll. pic.twitter.com/Sw800icnsu

— LovartAI (@lovart_ai) March 25, 2026

Update: Here’s a look at the UI, in which you can move & scale the selection rectangle, as well as the before & after images:

“3D scenes, websites, games, apps,” promises Spline. “Describe anything and Omma builds it for you in seconds.”

Omma combines code generation (LLMs), 3D AI mesh generation, and Image generation all in one place for you to build and ship. Deploy to production, assign custom domains, and more.

Ten years ago (!), the embryonic social app Peach suddenly blew up on the scene—only to molder shortly thereafter. Adam Lisagor tartly predicted that outcome right after Peach debuted:

I just joined Peach. Did you see that thing on Peach? Only teens use Peach these days. Nobody uses Peach anymore. Oh my god, remember Peach?

— Adam Lisagor (@adamlisagor) January 9, 2016

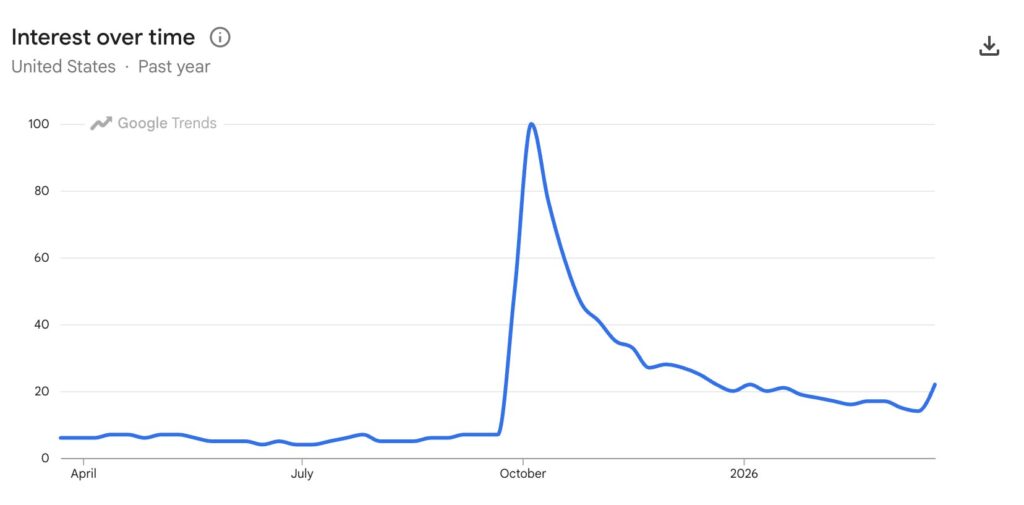

I’m reminded of this upon hearing that OpenAI has bailed out on Sora, which they launched just a few months ago. In a way I’m not surprised—check out how interest in the tech spiked & then rapidly cratered—except that just a couple of months ago Disney signed a billion-dollar deal to use it. ¯\_(ツ)_/¯

Oh Word, never change… 🙂 (I mean, absolutely do change, but you won’t, so—shine on, you crazy runtime.)

Cuando mueves una imagen en Microsoft Word. pic.twitter.com/0jjdsoAaEw

— Ross_co_Jones (@anonimo_jones) March 23, 2026

When can we get this (or equivalent) into Photoshop??

Okay it seems like @LumaLabsAI new uni-1 model is actually on a category of its own

You can give it one complex composition and its able to extract each layer as a new image generation

Are there any other models that can do this? https://t.co/COXdUvX4id pic.twitter.com/3w5quKoiFX

— Lucas Crespo (@lucas__crespo) March 23, 2026

On a conceptually (though not necessarily technically) related note, the LICA dataset may help model makers train layered generation:

Much of today’s AI-generated graphic designs look like slop because there is a lack of high-quality, open datasets. This space is a cluster of walled gardens (@figma , @canva , @Adobe ). We’ve built one of the largest graphic design dataset, 1.5 million compositions spanning… pic.twitter.com/YauAe7ugiD

— Priyaa (@pritopian) March 20, 2026

Speaking of spinning right ’round, check this out:

We just released Rotate Object in Photoshop (beta)

You can now rotate 2D images!

Then use Harmonize to add light and shadows, to blend it perfectly with the rest of the scene.

It’s like Turntable in Illustrator, but instead of vectors, it’s pixels in Photoshop! pic.twitter.com/85bvBIjCB9

— Kris Kashtanova (@icreatelife) March 12, 2026

Check out another view, from Paul Trani:

Game changer for compositors! Rotate an image as if it’s a 3D object in the #Photoshop beta! Interested?? pic.twitter.com/RUgsZZup7u

— Paul Trani (@paultrani) March 12, 2026

Five years ago, I spent an afternoon with a buddy watching Disco Diffusion resolve a weird, blurry, but ultimately delightful scene over the course of 15 minutes. Now Runway & NVIDIA are previewing generation that’s a mere ~90,000x faster than that. Ludicrous speed, go!!

A breakthrough in real-time video generation.

As a research preview developed with @NVIDIA and shared at @NVIDIAGTC this week, we trained a new real-time video model running on Vera Rubin. HD videos generate instantly, with time-to-first-frame under 100ms. Unlocking an entirely… pic.twitter.com/juafjvk0wm

— Runway (@runwayml) March 18, 2026
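For a rough sense of the arithmetic behind that comparison, here's a minimal back-of-the-envelope sketch in Python. The 15-minute figure and the sub-100ms time-to-first-frame come from the text above; the function name and constants are hypothetical. Since 100 ms is only an upper bound, the sketch also works out what per-frame latency a ~90,000x ratio would correspond to.

```python
def speedup(old_ms: int, new_ms: int) -> float:
    """Return how many times faster the new latency is than the old one."""
    if new_ms <= 0:
        raise ValueError("new_ms must be positive")
    return old_ms / new_ms


# ~15 minutes per image, per the Disco Diffusion anecdote above.
DISCO_DIFFUSION_MS = 15 * 60 * 1000

# Upper bound quoted in the tweet: time-to-first-frame under 100 ms.
ratio_at_100ms = speedup(DISCO_DIFFUSION_MS, 100)

# Assumption for illustration: the latency a ~90,000x ratio would imply.
implied_latency_ms = DISCO_DIFFUSION_MS / 90_000

print(f"{ratio_at_100ms:,.0f}x faster at 100 ms")
print(f"~90,000x corresponds to about {implied_latency_ms:.0f} ms")
```

In other words, the exact multiple depends heavily on which latency you plug in, so treat any single number as an order-of-magnitude gesture.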

I always appreciate getting a peek into the incredible effort & craftsmanship that go into a production like this. Forget special effects: the physical grit on display here can’t be faked.

Now throw your shoulders back and go effin’ nuts. 😀

And for some more blog-appropriate content: Here are some fun pics & vids my son Henry & I captured on Saturday during SF’s wonderfully diverse & quirky St. Patrick’s Day parade:

Bonus: here’s a gallery of Irish wolfhounds, if you’re into that kind of thing. I couldn’t quite get these good boys to align like Cerberus, so I resorted to telling Gemini my hopes & dreams—as one does.

An AI paradox: as models get vastly more complex, interfaces can get vastly simpler. We can make computers conform to our reality—not the other way around.

Steve Jobs described exactly this evolution all the way back in 1981:

Structuring your prompt well turns out to be key in avoiding garbled text. As the presenter says, “It’s not about writing more. It’s about writing in the right order.” Check out this brief overview.

In this tutorial, you’ll see how to use Nano Banana Pro and Kling 3.0 Omni together to solve one of the most common pain points in AI product video: text that blurs, warps, or drifts mid-motion. We’ll walk through a practical workflow for maintaining legibility and visual consistency in product shots, so your labels, logos, and copy stay clean from the first frame to the last.

Hey, remember the pandemic? We sure made some impulse buys then, didn’t we?

For me it was Insta360’s bizarre, modular 360º camera plus the elaborate mounting kit that promised to strap its shards onto the top & bottom of my DJI Mavic, enabling some magical, drone-less captures. Suffice it to say the thing was a complete POS—dysfunctional even as a handheld action cam, much less as a bunch of theoretically interconnected pieces thousands of feet in the air.

And yet… who doesn’t love the promise of capturing immersive footage that enables crazy post-processing camera moves? Insta’s on it, releasing their first 360º drone, the Antigravity A1:

Some cool details:

With Antigravity’s proprietary FreeMotion technology, the drone — together with the Vision goggles and Grip controller — enables an immersive flying experience that feels both natural and intuitive. Pilots can fly in one direction while looking in another. This level of immersion enables more freedom to explore. The 360 immersion doesn’t end just because the drone lands — recorded footage can be viewed in 360 over and over again, letting users discover new angles every time they watch.

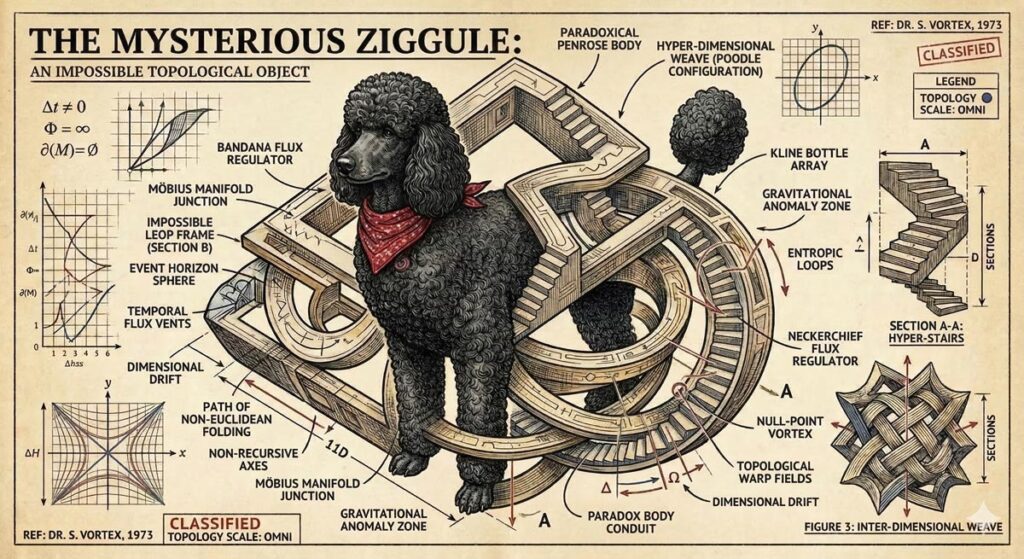

Long dog walks are for nothing if not visualizing whatever silliness pops into my head—which today happened to be our puppy Ziggy becoming an impossible object called a “Ziggule.”

I shared this with my cousin Alicia, who does a tremendous amount of work sheltering & rescuing dogs in Austin, and she requested a portrait of their current foster pooch (Tesseract). I was of course all too happy to oblige:

As it happens, folks at Google have had the same idea, and they’ve been putting Nano Banana to work helping zhuzh up pics of shelter pets in hopes of helping them find their forever homes. Let’s hear it for using AI & old-fashioned human creativity for good!

Photos play a big role in pet adoption.

We’ve teamed up with shelters across the country to give rescue pets glamorous headshots that show off their personalities, made with Nano Banana Pro.

Take a look below and contact our partner shelters for adoption inquiries pic.twitter.com/Lh565trjgR

— Google Gemini (@GeminiApp) January 21, 2026

Let’s do this, gang—yip yip. 🙂

As you’ve likely heard me say, I’ve gotten psyched up too many times about AI video-editing tech that fell short of its ambitions—but I’m hoping that this work from Adobe & Harvard collaborators can deliver what it describes:

We present Vidmento, an interactive video authoring tool that expands initial materials and ideas into compelling video stories through blending captured and generative media. To preserve narrative continuity and creative intent, Vidmento generates contextual clips that align with the user’s existing footage and story.

Per the site, Vidmento should enable:

The older I get, the harder it is to get the Kids These Days™ to grok just what a road-to-Damascus moment the arrival of the Mac presented. I flap my arms like some conspiracy nut at his cork board, trying in vain to convey the idea that in the pre-Mac days, personal computer “art” consisted of pecking out some green ASCII blocks on an Apple ][. Okay, grandpa, let’s get you to bed…

Anyway, predating even me (heh) is this glimpse of how computer animation was painstakingly eked out via data tape (!) back in 1971.

[Via Uri Ar]

“Every man got to have a code…” 🙂

Behold, A Day in the Life of an Ensh*ttificator:

Oh boy. 🙂 (But, like, holy crap—compare this to the hot garbage we were getting less than a year ago!)

Here’s the video containing those images. Overall it’s kinda good—kinda! pic.twitter.com/KOFBevbebU

— John Nack (@jnack) March 6, 2026

Among the misbegotten “Oh, everyone will love this—but rarely will anyone actually use it” AR demos of 2017 (right alongside “See whether this toaster fits on my counter!”), imagining restaurants plopping a 3D model onto your plate was always a banger. Leaving aside whether anyone would actually want or value that experience, the cost of realistically modeling dishes was prohibitive.

This new tech at least promises to take the grunt work out of model creation, turning a single photo into an AR-ready 3D asset (give or take a tine or two ;-)):

AR GenAI by AR Code is transforming the food industry. Creating an AR experience for a dish can now start with a single photo.

As shown in the video, a single dessert photo is converted into an AR-ready 3D model with realistic textures and depth. AR Code SaaS then instantly… pic.twitter.com/s1H5do1UUf

— Maxime Maisonneuve (@maximemaisonneu) January 25, 2026

“Wow, that’s some really sharp After Effects work,” I thought last year, when my wife showed me some animation her Airbnb colleague had created. But nope—the work came straight out of Canva.

Not content to chill with their surprisingly capable foundation, Canva is continuing to build out the “Creative Operating System” and has announced the acquisition of up-and-coming 2D animation tool Cavalry:

In their blog post they seem pretty adamant that the acquisition won’t result in dumbing down the core app:

Built for professional motion designers

Cavalry earned its place in the motion design world by doing something different. Its procedural, systems-based approach prioritises flexibility, repeatability, and performance. It wasn’t built as a simplified alternative; it was built specifically for professional motion designers and the complex workflows they rely on. That professional focus remains central.

We’ve invested in Cavalry because of its depth as a professional-grade motion tool. The goal isn’t to simplify what makes it powerful, but to support and strengthen it. Professional motion design demands precision, flexibility, and tools that can scale across complex projects.

Much as with their acquisition of Affinity, however, I’d fully expect Canva to integrate underlying tech into the core design platform, radically simplifying the interface to it—including by providing agentic and chat-based touchpoints.

As with the myriad node-based systems that sprang up last year, I wouldn’t expect most people to ever see or touch the underlying data structures. Rather, what’s essential is that the main tool can understand & modify them, so that it can deliver brilliant results at scale. That necessitates a very approachable, and totally complementary, UX.

I’m excited to see what’s next!

I try not to curse on this blog, doing so maybe a dozen times in 20+ (!!) years of posting. But circa 2013-2017, when I saw what felt like uncritical praise for Adobe’s voice-driven editing prototypes, I called bullshit.

The high-level concept was fine, but the tech at the time struck me as the worst of both worlds: the imprecision of language (e.g. how does a normal person know the term “saturation,” and how does an expert describe exactly how much they want?) combined with the fragility of traditional selection & adjustment algorithms.

Now, however, generative tech can indeed interpret our language & effect changes—and in the case of Krea’s new realtime mode, in a highly responsive way:

introducing Voice Mode.

speak as you draw and get changes in real-time.

available now in Krea iPad. pic.twitter.com/c6mHHjupmW

— KREA AI (@krea_ai) March 2, 2026

Whether or not voice per se becomes a popular modality here, closing the gap between idea & visual is just so seductive. To emphasize a previously made point:

We simply have not started rethinking interactions from the grounds up.

So many possibilities wide open when you think of human – AI in micro feedback loops vs automation alone or classic back and forth. https://t.co/iVKb02SbdU

— tuhin (@tuhin) February 18, 2026

I got into the Mac scene just a touch too late to have interacted with Aldus (acquired by Adobe in 1994), and I’m sorry not to have known the late Paul Brainerd, who passed away a couple of weeks ago. To mark the occasion, some friends have been resharing this video, created when the company became part of the Big Red A. It’s fun to see a few familiar faces & to remember the tech vibe of those early days:

Engine Engine, Number Five…

I had no idea that the ol’ girl had it in (er, on) her—but this is too odd & thus interesting not to pass along:

Meanwhile, speaking of odd: Having just visited the Mojave aircraft boneyard (see pics) and Spaceport, from which the weird creations of Burt Rutan & co. operate, I couldn’t resist trying this silliness:

I asked Nano Banana to imagine legendary aircraft designer Burt Rutan rocking the sort of canard wings he loves including on planes.

It… made some attempts. 🙂 pic.twitter.com/VCq1H6ldzU

— John Nack (@jnack) February 22, 2026

I couldn’t have contrived a better example of the power & pitfalls of generative imaging if I tried.

Here’s a pretty crummy cell phone picture I took yesterday from a moving train & then enhanced with a single prompt using Gemini. The results are incredible—if you don’t really care about the exact capacity of your jumbo jet! 🙂

The current state of AI-driven editing drives home the wisdom of that old Russian saying, “Trust… but verify.”

This also highlights the subtle treachery of AI photography: look how it shortened the 747! pic.twitter.com/Yga5oo1D0B

— John Nack (@jnack) February 27, 2026

See also my previously shared example, in which Nano Banana quietly upgraded this propeller-driven plane into a jet:

Testing fence removal on my son’s photo using @NanoBanana, @ChatGPTapp, and @bfl_ml.

They’re all impressive, but Nano tried to put jet engines on this prop plane, so I’m giving this round to ChatGPT. pic.twitter.com/DOvZQLT5H5

— John Nack (@jnack) December 23, 2025

When it rains, it pours: No sooner did I post about text->vector than I saw two new entrants in that space. The new Quiver AI is claimed to have “solved vector design with AI”:

Introducing @QuiverAI, a new AI lab and product company focused on frontier vector design.

We’ve raised an $8.3M seed round led by @a16z, with support from amazing angels and investors.

Our first model, Arrow-1.0, generates SVGs from images and text. It’s available now in… pic.twitter.com/mLoeM2UpGf

— Joan Rodriguez (@joanrod_ai) February 25, 2026

Here’s my first quick test, in which Quiver & Illustrator utterly smoke direct chat->vector output in Gemini & ChatGPT:

Testing text->vector in the new @QuiverAI vs. Adobe Illustrator and (yikes!) Gemini and ChatGPT. (Prompt: “A three-quarter view of a silver 1990 Mazda Miata.”) pic.twitter.com/MjTuFYLGQ3

— John Nack (@jnack) February 26, 2026

Meanwhile, check out what Recraft produced:

Impressive results from @recraftai! https://t.co/VbukNz0rtn pic.twitter.com/vaIN4ySQ4H

— John Nack (@jnack) February 26, 2026

Elsewhere, Hero Studio promises great image->SVG conversion. I’ve applied for access & am eager to take it for a spin:

You can now bring your images to life, just upload any image and it turns it into a clean and precise SVG. we’re using a custom model specifically trained for SVG recognition and generation. the results are insane pic.twitter.com/s6e4tJ4IWm

— Junior García (@jrgarciadev) February 25, 2026

When we launched Firefly three years ago (!), we talked up prompt-based vector creation. When the feature later arrived in Illustrator, it was really text-to-image-to-tracing. That could be fine, actually, provided that the conversion process did some smart things around segmenting the image, moving objects onto their own layers, filling holes, and then harmoniously vectorizing the results. I’m not sure whether Adobe actually got around to shipping that support.

In any case, Recraft now promises vector creation directly from prompts:

V4 Vector is built for real design workflows.

Clean path structure.

SVG export.

Print-ready (300 DPI, CMYK).

Generate → export → refine pic.twitter.com/XenDDSTjmd

— Recraft (@recraftai) February 23, 2026

Meanwhile Gemini promises SVG creation right out of the box. My previous attempts to use it produced results that were, um, impressionistic…

Nano Banana->SVG results can be… unique. 🙂 https://t.co/FuEgYZiL5T pic.twitter.com/9BwSqmsLmT

— John Nack (@jnack) November 20, 2025

…and based on what they’re showing vis-à-vis recent updates, I haven’t been in a hurry to try again:

“Generate an SVG of a pelican riding a car in France with a cat sitting beside it. Background has Eiffel tower.” pic.twitter.com/RjCnte4cky

— Oriol Vinyals (@OriolVinyalsML) February 19, 2026

My longtime Adobe friend Adam Pratt founded the media digitization & preservation company Chaos to Memories a few years ago, and now he and his team have a really comprehensive overview of the various formats one may encounter:

Every photo project should start with gathering all these materials because it helps us grasp the scope of your project and work efficiently. To help you identify the different types in your collection, many common photo, video, audio, and digital formats are explained in the list below.

I’ve really enjoyed collaborating with Black Forest Labs, the brain-geniuses behind Flux (and before that, Stable Diffusion). They’re looking for a creative technologist to join their team. Here’s a bit of the job listing in case the ideal candidate might be you or someone you know:

BFL’s models need someone who knows them inside out – not just what they can do today, but what nobody’s tried yet. This role sits at the intersection of creative excellence, deep model knowledge, and go-to-market impact. You’ll create the work that makes people realize what’s possible with generative media – original pieces, experiments, and creative assets that set the standard for what FLUX can do and show it to the world

— Create original creative work that pushes FLUX to its limits – experiments, visual explorations, and pieces that show what’s possible before anyone else figures it out

— Collaborate with the research and product teams from the start of training/product development to understand the core strengths of each new model/product and create assets that amplify and showcase these. You will also provide feedback to those teams throughout the development process on what needs to improve.

Former Apple designer Tuhin Kumar, who recently logged three years at Luma AI, makes a great point here:

We simply have not started rethinking interactions from the grounds up.

So many possibilities wide open when you think of human – AI in micro feedback loops vs automation alone or classic back and forth. https://t.co/iVKb02SbdU

— tuhin (@tuhin) February 18, 2026

To the extent I give Adobe gentle but unending grief about their near-total absence from the world of UI innovation, this is the kind of thing I have in mind. What if any layer in Photoshop—or any shape in Illustrator—could have realtime-rendering generative parameters attached?

Like, where are they? Don’t they want to lead? (It’s a genuine question: maybe the strategy is just to let everyone else try things, and then to finally follow along at scale.) And who knows, maybe certain folks are presently beavering away on secret awesome things. Maybe… I will continue hoping so!

Supporting my MiniMe Henry’s burgeoning interest in photography remains a great joy. Having recently captured the Super Bowl flyover with him (see previous), I prayed that Monday’s torrential downpour in LA just might give us some spectacular skies—and, what do you know, it did! Check out our gallery (selects below), featuring one seriously exuberant kid!

I’ve also been enjoying Hen’s great eye for reflections, put to good use during our recent visit to the USS Hornet:

Hey, I’ve got a fun, quick question, said with love: where the hell is Adobe in all this…?

today, we’re announcing the acquisition of @wand_app and the release of our new iPad app.

Krea iPad integrates the best of both worlds: native iOS feel with custom brushes and real-time AI.

download it now pic.twitter.com/VNCf8eB9eK

— KREA AI (@krea_ai) February 12, 2026

It’s hard to believe that when I dropped by Google in 2022, arguing vociferously that we work together to put Imagen into Photoshop, they yawned & said, “Can you show up with nine figures?”—and now they’re spending eight figures on a 60-second ad to promote the evolved version of that tech. Funny ol’ world…

Real or AI rendered? Who even knows anymore, but either way these depictions are super well done. They even got the triumphal Riley Mills stomp!

[Via Chris Davis]

MiniMe on the lens + Dad in Lightroom/Photoshop, making the dream work. 🙂

Bad To The B-ONE

Capturing yesterday’s #SuperBowl with MiniMe #B1 #F15 #F18 #F35 pic.twitter.com/FB96CmSUW3

— John Nack (@jnack) February 10, 2026

Check out our gallery for full-res shots plus a few behind-the-scenes pics. BTW: Can you tell which clouds were really there and which ones came via Photoshop’s Sky Replacement feature? If not, then the feature and I have done our jobs!

And peep this incredibly smooth camerawork that paired the flyover with the home of the brave:

Through insanely good timing, I caught Friday’s practice flyover as the jets headed up to Levi’s Stadium:

Crazy luck heading home in my neighborhood tonight!

B-1, two F-15s, two F/A-18s, and two F-35s. Practicing for the Super Bowl flyover on Sunday. pic.twitter.com/X73Lv7aXJ6

— John Nack (@jnack) February 7, 2026

Right now my MiniMe & I are getting set to head up to the Bayshore Trail with proper cameras, as we hope to catch the real event at 3:30 local time.

Meanwhile, I’ve been enjoying this deep dive video (courtesy of our Photoshop teammate Sagar Pathak, who’s gotten just insane access in past years). It features interviews with multiple pilots, producers, and more as they explain the challenges of safely putting eight cross-service aircraft into a tight formation over hundreds of thousands of people—and in front of a hundred+ million viewers. I think you’ll dig it.

A couple of weeks ago I mentioned a cool, simple UI for changing camera angles using the Qwen imaging model. Along related lines, here’s an interface for relighting images:

Qwen-Image-Edit-3D-Lighting-Control app, featuring 8× horizontal and 3× elevational positions for precise 3D multi-angle lighting control. It enables studio-level lighting with fast Qwen Image Edit inference, paired with Multi-Angle-Lighting adapter. Try it now on @huggingface. pic.twitter.com/b3UrELE6Cn

— Prithiv Sakthi (@prithivMLmods) February 4, 2026
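The "8× horizontal and 3× elevational positions" in that description work out to 24 distinct light placements. Here's a minimal sketch of enumerating such a grid in Python. The evenly spaced azimuths, the three elevation values, and all names are assumptions for illustration, not the app's actual parameters or API.

```python
from itertools import product

# Assumption: 8 horizontal positions evenly spaced around the subject.
AZIMUTHS_DEG = [i * 360 // 8 for i in range(8)]  # 0, 45, ..., 315

# Assumption: 3 hypothetical elevations (below, level with, above the subject).
ELEVATIONS_DEG = [-30, 0, 30]


def lighting_positions():
    """Return every (azimuth, elevation) pair in the 8 x 3 grid."""
    return list(product(AZIMUTHS_DEG, ELEVATIONS_DEG))


positions = lighting_positions()
print(f"{len(positions)} lighting positions")
```

A discrete grid like this is what makes a simple picker UI feasible: each cell maps to one preset the model can be conditioned on, rather than a free-form description of the light.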