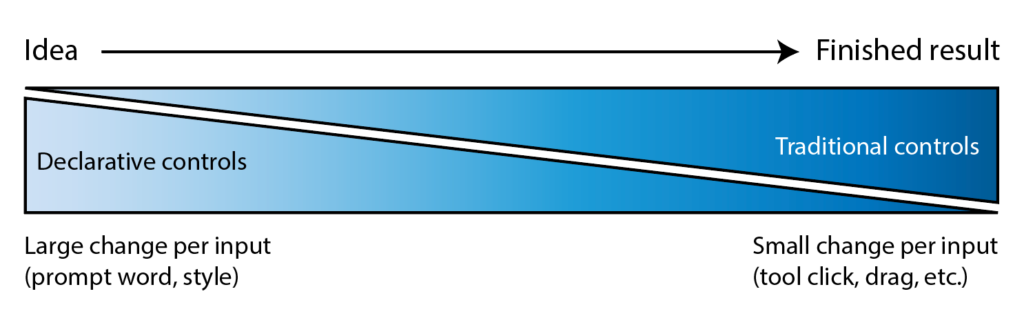

I’ve long quoted James Ratliff, the super sharp designer behind Adobe’s Project Graph (who’s recently decamped to Figma), for nicely phrasing how the process of generating & refining ideas generally starts broad/declarative (searching, prompting) and moves toward fine-grained methods (selecting, moving, etc.):

I see an increasing number of tool & model creators mixing modalities—even in the Gemini Super Bowl ad featuring a mom & daughter drawing a simple circle to show where they’d like to add a dog bed.

I’m eager to check out Lovart’s take on the possibilities, especially for animation:

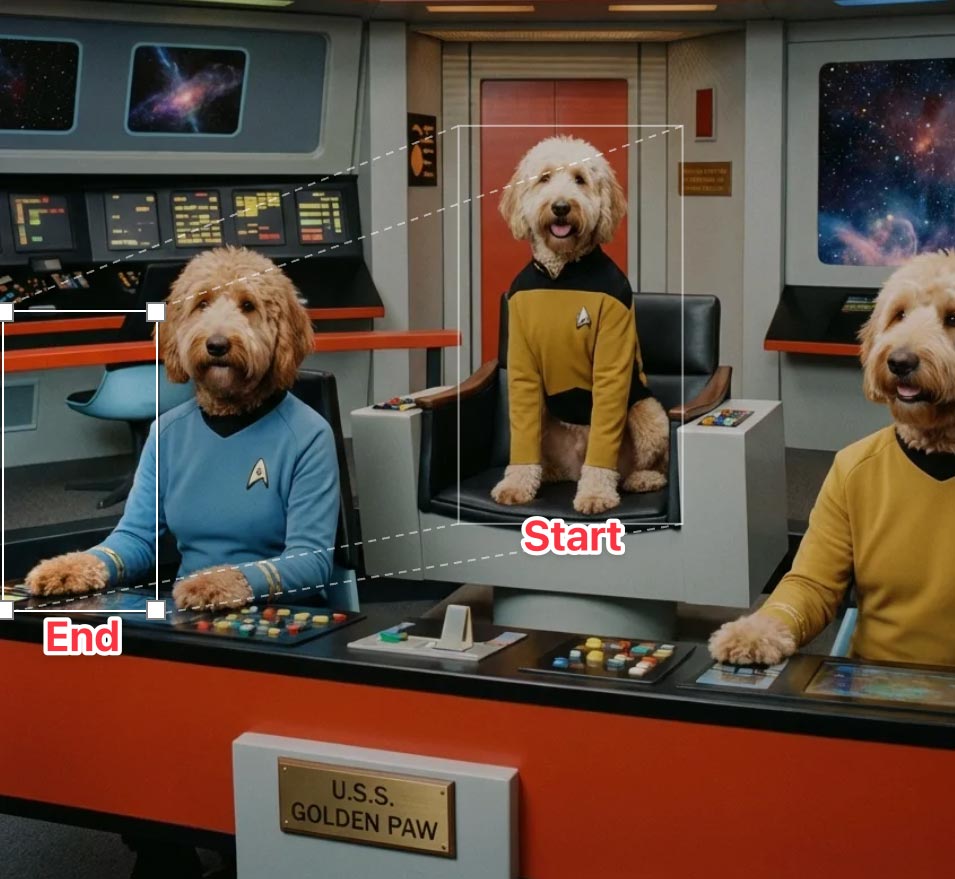

⚡️ New on Lovart: Move Object

→ Select any object with rectangular or lasso tool

→ Move it wherever you want

→ Prompt optional modifications

→ One clean, consistent image

No masks. No layers. No re-roll. pic.twitter.com/Sw800icnsu

— LovartAI (@lovart_ai) March 25, 2026

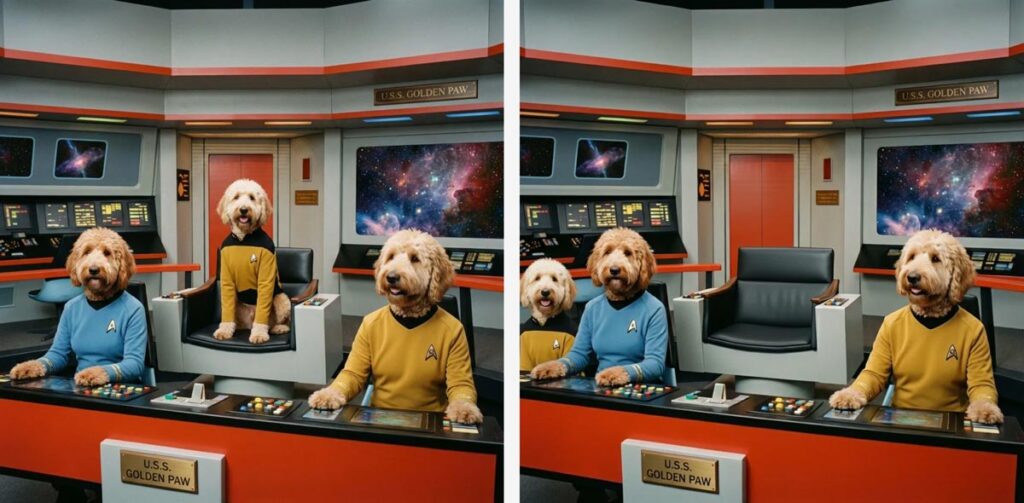

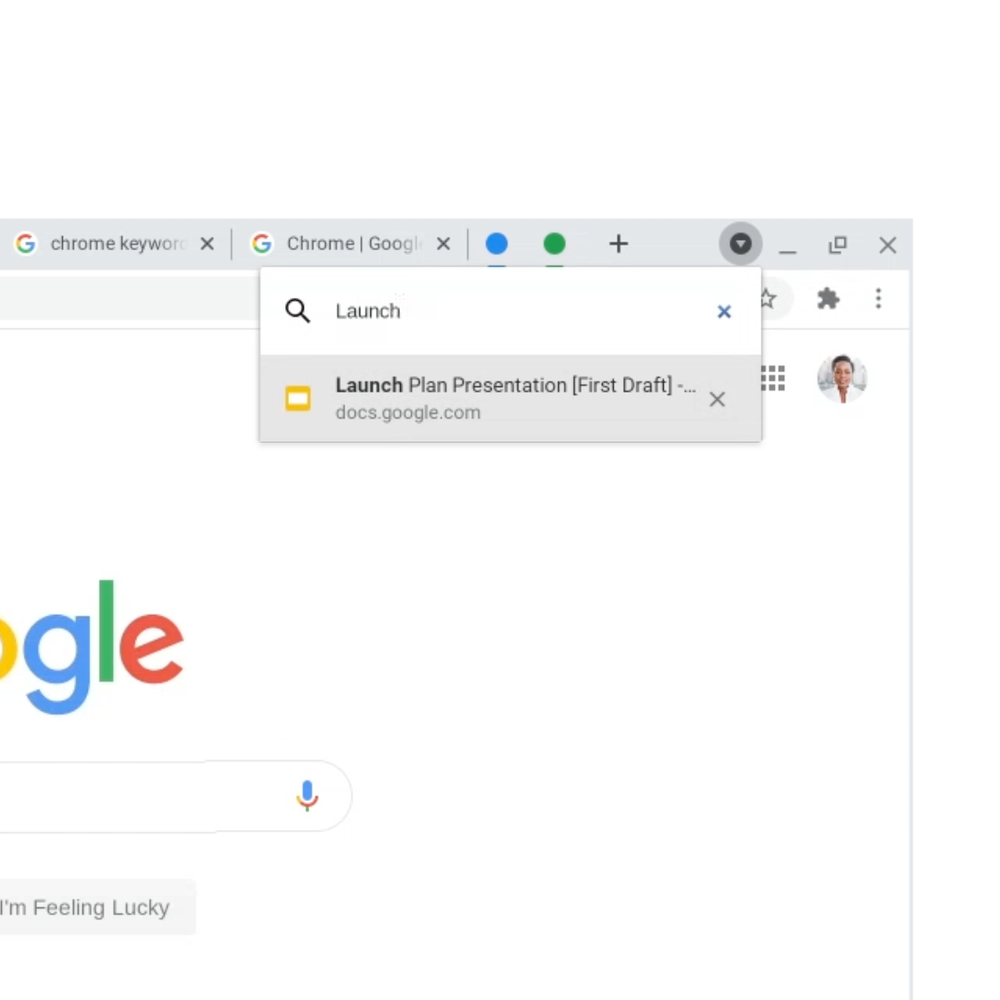

Update: Here’s a look at the UI, in which you can move & scale the selection rectangle, as well as the before & after images: