I had no idea! Merci, Monsieur Pierre.

Category Archives: Illustration

The scarily beautiful animation of Sincitium

Side note: “Macrófago” (macrophage) is 100% the best word I’ve learned all week.

Sincitium is finally here.

We are pleased to present our latest piece: a concept trailer created specifically for the @runwayml Big Pitch Contest. For this project, we wanted to explore a completely different aesthetic from our usual studio style, and this film is the result of… pic.twitter.com/FHKkZWjjJg

— Contanimation (@Delachica_) May 4, 2026

Sketching to control Nano Banana in Photoshop

Just like it says on the tin. Check it out:

Photoshop is the most powerful way to use Nano Banana 2

In photoshop you can sketch and control exactly where everything goes in your nano banana 2 generation

Here’s how I’ve been using it: #AdobeFireflyAmbassadors #Ad #AdobePartnerModels pic.twitter.com/c7YzV55JNS

— Allen T. (@Mr_AllenT) April 6, 2026

“LooseRoPE” promises super intuitive illustration & compositing

Man, it must be nearly 20 years ago that we started envisioning drag-and-drop-simple composition and compositing in Photoshop—back when gradient-domain painting & blending was the emerging hotness. After plenty of false starts, could these simple interaction patterns finally become mainstream? Maybe! I must know more of this witchcraft:

Do you like image editing? Don’t like prompt engineering? Want to see what a giraffe-duck hybrid looks like?

If you answered yes at least once, you may like our new #SIGGRAPH2026 paper: LooseRoPE, which presents a new, prompt-free way to edit images using simple visual cues pic.twitter.com/JMzMDHJ9wE

— Etai Sella (@etai_sella) April 23, 2026

You could have a steam train…

Despite—or perhaps because of—growing up without MTV (I know, the Gen X horror...), I’ve always had a real fascination with the video for Peter Gabriel’s Sledgehammer. Check out its rad zoetrope picture disc incarnation:

The zoetrope picture disc 12” of Sledgehammer is out this Saturday as part of #RecordStoreDay

Designed by Drew Tetz and Marc Bessant.

Please visit the Record Store Day website to check out the full list of releases and also visit your local record store to see what they plan to… pic.twitter.com/arJfTYLk4k

— Peter Gabriel (@itspetergabriel) April 13, 2026

And, because why not, it’s Friday & you deserve nice things, here’s the original vid:

“Sketch to Vector” comes to Illustrator

Nano Banana + Adobe tech FTW! Here’s a quick look:

And here’s a deeper dive:

Using AI to save pets

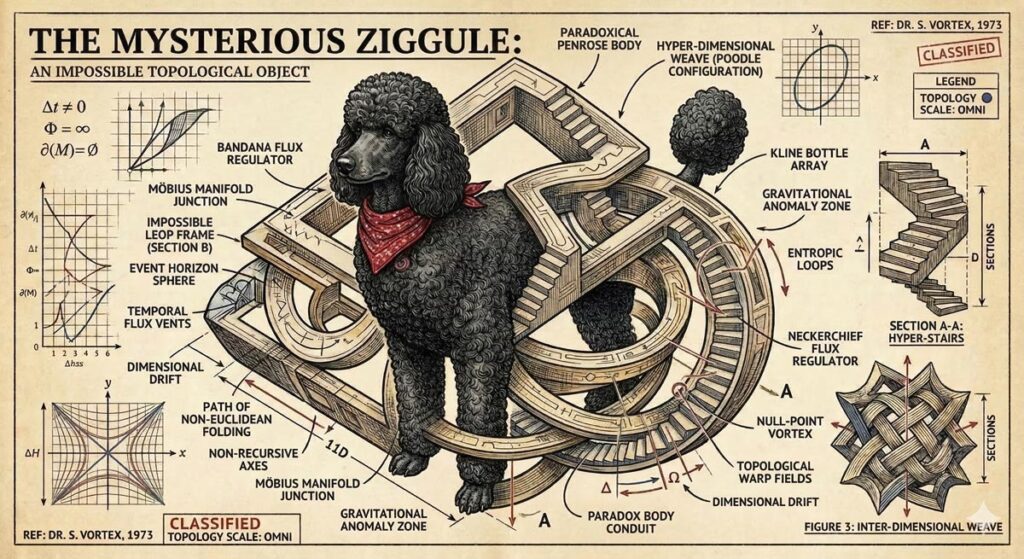

Long dog walks are for nothing if not visualizing whatever silliness pops into my head—which today happened to be our puppy Ziggy becoming an impossible object called a “Ziggule.”

I shared this with my cousin Alicia, who does a tremendous amount of work sheltering & rescuing dogs in Austin, and she requested a portrait of their current foster pooch (Tesseract). I was of course all too happy to oblige:

As it happens, folks at Google have had the same idea, and they’ve been putting Nano Banana to work helping zhuzh up pics of shelter pets in hopes of helping them find their forever homes. Let’s hear it for using AI & old-fashioned human creativity for good!

Photos play a big role in pet adoption.

We’ve teamed up with shelters across the country to give rescue pets glamorous headshots that show off their personalities, made with Nano Banana Pro.

Take a look below and contact our partner shelters for adoption inquiries pic.twitter.com/Lh565trjgR

— Google Gemini (@GeminiApp) January 21, 2026

Computer animation in 1971 (!)

The older I get, the harder it is to get the Kids These Days™ to grok just what a road-to-Damascus moment the arrival of the Mac presented. I flap my arms like some conspiracy nut at his cork board, trying in vain to convey the idea that in the pre-Mac days, personal computer “art” consisted of pecking out some green ASCII blocks on an Apple ][. Okay, grandpa, let’s get you to bed…

Anyway, predating even me (heh) is this glimpse of how computer animation was painstakingly eked out via data tape (!) back in 1971.

[Via Uri Ar]

Speak it -> See it, with Krea’s new voice mode

I try not to curse on this blog, doing so maybe a dozen times in 20+ (!!) years of posting. But circa 2013-2017, when I saw what felt like uncritical praise for Adobe’s voice-driven editing prototypes, I called bullshit.

The high-level concept was fine, but the tech at the time struck me as the worst of both worlds: the imprecision of language (e.g. how does a normal person know the term “saturation,” and how does an expert describe exactly how much they want?) combined with the fragility of traditional selection & adjustment algorithms.

Now, however, generative tech can indeed interpret our language & effect changes—and in the case of Krea’s new realtime mode, in a highly responsive way:

introducing Voice Mode.

speak as you draw and get changes in real-time.

available now in Krea iPad. pic.twitter.com/c6mHHjupmW

— KREA AI (@krea_ai) March 2, 2026

Whether or not voice per se becomes a popular modality here, closing the gap between idea & visual is just so seductive. To emphasize a previously made point:

We simply have not started rethinking interactions from the grounds up.

So many possibilities wide open when you think of human – AI in micro feedback loops vs automation alone or classic back and forth. https://t.co/iVKb02SbdU

— tuhin (@tuhin) February 18, 2026

AI + SVG: Vector all the things!

When it rains, it pours: No sooner did I post about text->vector than I saw two new entrants in that space. The new Quiver AI is claimed to have “solved vector design with AI”:

Introducing @QuiverAI, a new AI lab and product company focused on frontier vector design.

We’ve raised an $8.3M seed round led by @a16z, with support from amazing angels and investors.

Our first model, Arrow-1.0, generates SVGs from images and text. It’s available now in… pic.twitter.com/mLoeM2UpGf

— Joan Rodriguez (@joanrod_ai) February 25, 2026

Here’s my first quick test, in which Quiver & Illustrator utterly smoke direct chat->vector output in Gemini & ChatGPT:

Testing text->vector in the new @QuiverAI vs. Adobe Illustrator and (yikes!) Gemini and ChatGPT. (Prompt: “A three-quarter view of a silver 1990 Mazda Miata.”) pic.twitter.com/MjTuFYLGQ3

— John Nack (@jnack) February 26, 2026

Meanwhile, check out what Recraft produced:

Impressive results from @recraftai! https://t.co/VbukNz0rtn pic.twitter.com/vaIN4ySQ4H

— John Nack (@jnack) February 26, 2026

Elsewhere, Hero Studio promises great image->SVG conversion. I’ve applied for access & am eager to take it for a spin:

You can now bring your images to life, just upload any image and it turns it into a clean and precise SVG. we’re using a custom model specifically trained for SVG recognition and generation. the results are insane pic.twitter.com/s6e4tJ4IWm

— Junior García (@jrgarciadev) February 25, 2026

Can AI finally generate useful vectors?

When we launched Firefly three years ago (!), we talked up prompt-based vector creation. When the feature later arrived in Illustrator, it was really text-to-image-to-tracing. That could be fine, actually, provided that the conversion process did some smart things around segmenting the image, moving objects onto their own layers, filling holes, and then harmoniously vectorizing the results. I’m not sure whether Adobe actually got around to shipping that support.

In any case, Recraft now promises vector creation directly from prompts:

V4 Vector is built for real design workflows.

Clean path structure.

SVG export.

Print-ready (300 DPI, CMYK).

Generate → export → refine pic.twitter.com/XenDDSTjmd

— Recraft (@recraftai) February 23, 2026

Meanwhile Gemini promises SVG creation right out of the box. My previous attempts to use it produced results that were, um, impressionistic…

Nano Banana->SVG results can be… unique. 🙂 https://t.co/FuEgYZiL5T pic.twitter.com/9BwSqmsLmT

— John Nack (@jnack) November 20, 2025

…and based on what they’re showing vis-à-vis recent updates, I haven’t been in a hurry to try again:

“Generate an SVG of a pelican riding a car in France with a cat sitting beside it. Background has Eiffel tower.” pic.twitter.com/RjCnte4cky

— Oriol Vinyals (@OriolVinyalsML) February 19, 2026

Krea brings realtime generative painting to iPad

Hey, I’ve got a fun, quick question, said with love: where the hell is Adobe in all this…?

today, we’re announcing the acquisition of @wand_app and the release of our new iPad app.

Krea iPad integrates the best of both worlds: native iOS feel with custom brushes and real-time AI.

download it now pic.twitter.com/VNCf8eB9eK

— KREA AI (@krea_ai) February 12, 2026

Adobe vets launch AniStudio

My former colleagues Jue Wang & Chen Fang are making an impressive indie debut:

AniStudio exists because we believe animation deserves a future that’s faster, more accessible, and truly built for the AI era—not as an add-on, but from the ground up. This isn’t a finished story. It’s the first step of a new one, and we want to build it together with the people who care about animation the most.

Check it out:

Introducing https://t.co/zxqLkGyNDh: the first AI-native Animation platform.

We’re still cooking, so… Repost & comment to join the beta (FREE access). #AdobeAnimate #AniStudio pic.twitter.com/diPJV1p2CW

— AniStudio (@AniStudio_ai) February 4, 2026

AirDraw: Slick 3D drawing for Vision Pro

Check out this fun, physics-enabled prototype from Justin Ryan:

AirDraw on Apple Vision Pro is incredible when you toggle on physics.

Being able to interact with your 3D drawings makes them feel like they are actually in your room. pic.twitter.com/jFNf56f8eg

— Justin Ryan ᯅ (@justinryanio) January 27, 2026

Here’s an extended version of the demo:

The moment I switched on gravity was the moment everything changed.

Lines I had just drawn started to fall, swing, and collide like they were suddenly alive inside my room. A simple sketch became an object with weight. A doodle turned into something that could react back. It is one of those Vision Pro moments where you catch yourself smiling because it feels playful in a way you do not see coming.

Of course, Old Man Nack™ is inclined to be a little cautious here: ten years ago (!) my kids were playing with Adobe’s long-deceased Project Dali…

…and five years ago Google bailed on the excellent Tilt Brush 3D painting app it acquired. ¯\_(ツ)_/¯

And yet, and yet, and yet… I Want To Believe. As I wrote back in 2015,

I always dreamed of giving Photoshop this kind of expressive painting power; hence my long & ultimately fruitless endeavor to incorporate Flash or HTML/WebGL as a layer type. Ah well. It all reminds me of this great old-ish commercial:

So, in the world of AI, and with spatial computing staying a dead parrot (just resting & pining for the fjords!), who knows what dreams may yet come?

When life gives you hospitalized lemons…

…you waste (er, pass) the time screwing around with competitive AI model testing featuring the building’s baffling architecture…

Round 2 pic.twitter.com/zSGHVL6aPL

— John Nack (@jnack) December 20, 2025

…and sketchy chow:

More in-hospital @NanoBanana vs. ChatGPT testing:

“Please create a funny infographic showing a cutaway diagram for the world’s most dangerous hospital cuisine: chicken pot pie. It should show an illustration of me (attached) gazing in fear…” pic.twitter.com/txnuamvGVq

— John Nack (@jnack) December 20, 2025

Lucky & Charming

This season my alma mater has been rolling out sport-specific versions of the classic leprechaun logo, and when the new basketball version dropped today, I decided to have a little fun seeing how well Nano Banana could riff on the theme.

My quick take: It’s pretty great, though applying sequential turns may cause the style to drift farther from the original (more testing needed).

I dig it. Just for fun, I asked Google’s @NanoBanana to create more variations for other sports: pic.twitter.com/i3CBTr8bpp

— John Nack (@jnack) December 9, 2025

AI-powered catharsis

I can’t think of a more burn-worthy app than Concur (whose “value prop” to enterprises, I swear, includes the amount they’ll save when employees give up rather than actually get reimbursed).

That’s awesome!

Given my inability to get even a single expense reimbursed at Microsoft, plus similar struggles at Adobe, I hope you won’t mind if I get a little Daenerys-style catharsis on Concur (via @GeminiApp, natch). pic.twitter.com/128VExTDoS

— John Nack (@jnack) November 22, 2025

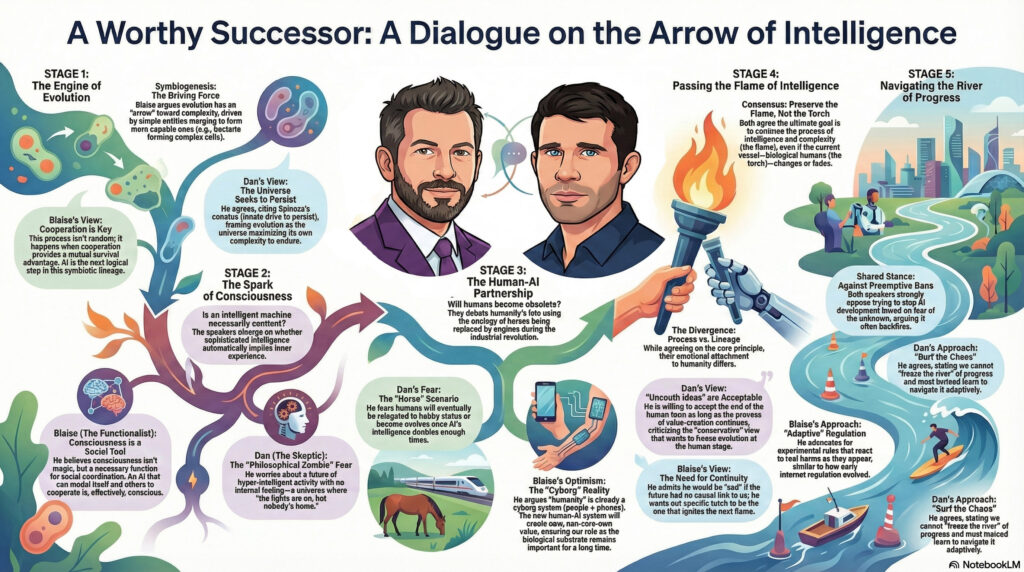

Visualizing conversations with Nano Banana

The ever-thoughtful Blaise Agüera y Arcas (CTO of Technology & Society at Google) recently sat down for a conversation with the similarly deep-thinking Dan Faggella. I love that I was able to get Gemini to render a high-level view of the talk:

My workflow, FWIW:

- Use Gemini in Chrome to create a summary.

- Open it in Gemini & copy it to a Google Doc.

- Open the doc in NotebookLM & specify infographic creation preferences.

- Download image, open it in Gemini, and refine likenesses by uploading images of each speaker.

- Make minor tweaks in Photoshop to deal with the aspect ratio changing (a subtle & intermittent but annoying bug).

Here’s the stimulating chat itself:

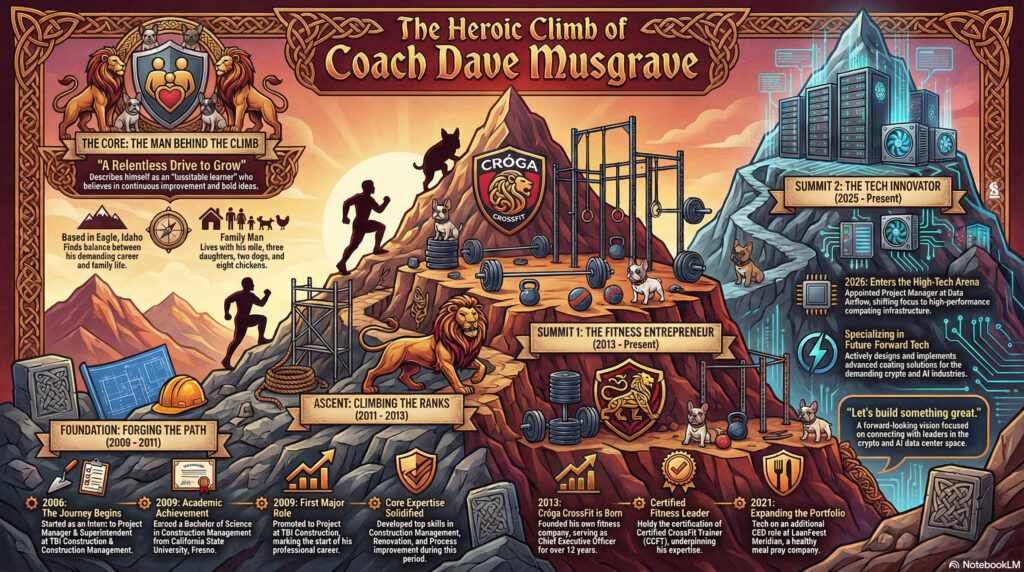

Need an ego boost? Show NotebookLM your résumé.

Wow—check out the infographic & video it made for me:

NotebookLM one-shotted this video based on the same source. Like, whoever this dude is, I’d hire him! pic.twitter.com/CNdyJUdF8D

— John Nack (@jnack) November 21, 2025

I feel like I’m gonna get at least briefly obsessed with doing this for friends—e.g. my coach Dave:

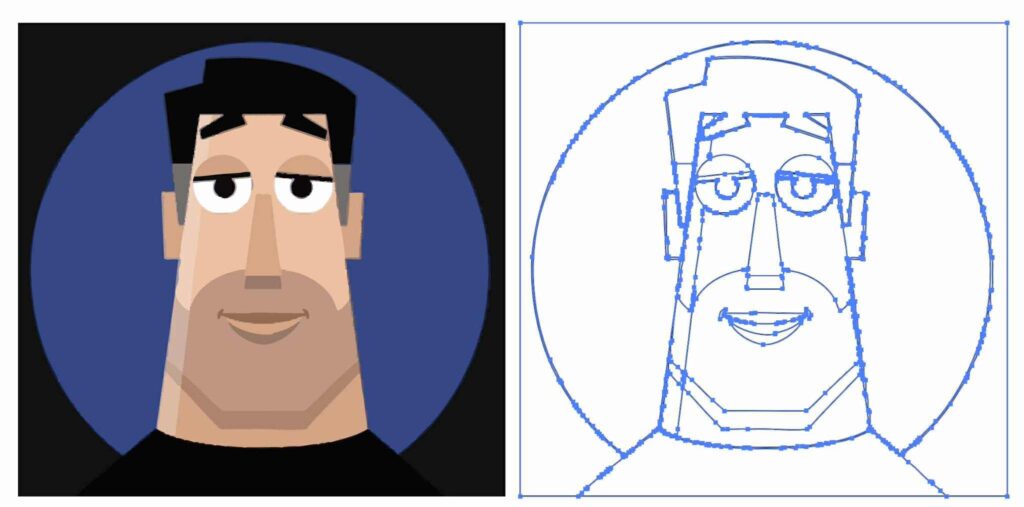

Gemini/Nano Banana promises SVG generation

Creating clean vectors has proven to be an elusive goal. Firefly in Illustrator still (to my knowledge) just generates bitmaps, which then get vectorized. So this tweet caught my attention:

Free-form SVG generation has always been an incredibly hard problem – a challenge I’ve worked on for two years. But with #Gemini3, everything has changed! Now, everyone is designer.

Proud of the amazing team behind breakthrough, and always excited for our future release! https://t.co/rlpUdgjY5Y pic.twitter.com/yeJG36lzKm

— Mu Cai (@MuCai7) November 19, 2025

In my very limited testing so far, however, results have been, well, impressionistic. 🙂

Here’s a direct comparison of my friend Kevin’s drawing (which I received as a bitmap image) vectorized via Image Trace (way more points than I’d like, but generally high fidelity) vs. the same one converted to SVG via Gemini (clean code/lines, but large deviation from the source drawing):

But hey, give it time. For now I love seeing the progress!

Flux hackathon provides perspective

The team at BFL is celebrating some of the most interesting, creative uses of the Flux model. Having helped bring the Vanishing Point tool to Photoshop, and having always been interested in building more such tech, I was especially drawn to this one:

Best Overall Winner

Perspective Control using Vanishing Points (jschoormans)

Just like Renaissance artists who start with perspective grids, this Kontext LoRa lets you control the exact perspective point in AI-generated images. pic.twitter.com/phAY41KYdP

— Black Forest Labs (@bfl_ml) October 1, 2025

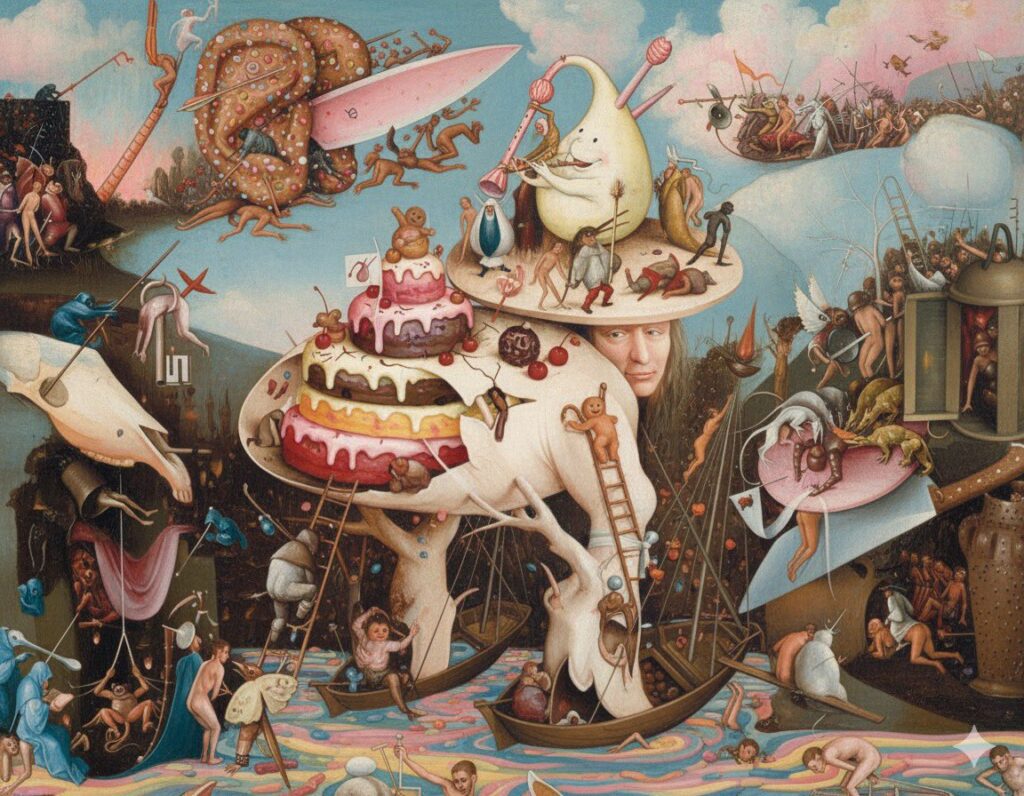
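A quick aside for the geometry-curious: a vanishing point is simply where the image-space projections of parallel 3D edges converge. Here's a minimal, textbook sketch that finds it as the intersection of two traced edges using homogeneous coordinates. To be clear, this is an assumption-laden illustration, not how the Kontext LoRA, Flux, or Photoshop's Vanishing Point tool actually works under the hood, and the function names and sample coordinates are hypothetical.

```python
from typing import Optional, Tuple

# Hypothetical helpers illustrating the textbook construction;
# this is NOT the LoRA's or Photoshop's actual implementation.
Point = Tuple[float, float]
Edge = Tuple[Point, Point]
Vec3 = Tuple[float, float, float]


def cross(a: Vec3, b: Vec3) -> Vec3:
    """3D cross product of two homogeneous vectors."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def line_through(p: Point, q: Point) -> Vec3:
    """Homogeneous line (a, b, c), meaning a*x + b*y + c = 0, through p and q."""
    return cross((p[0], p[1], 1.0), (q[0], q[1], 1.0))


def vanishing_point(e1: Edge, e2: Edge, eps: float = 1e-9) -> Optional[Point]:
    """Intersect two image edges. None means they're parallel in the image,
    i.e. the vanishing point lies at infinity."""
    v = cross(line_through(*e1), line_through(*e2))
    if abs(v[2]) < eps:
        return None
    # Dehomogenize back to ordinary image coordinates.
    return (v[0] / v[2], v[1] / v[2])


print(vanishing_point(((0, 0), (2, 1)), ((0, 4), (2, 3))))  # prints (4.0, 2.0)
```

In other words, two edges that slope toward each other converge at (4, 2), while edges that stay parallel on screen (say, a horizon-aligned pair) return None. Tools like Vanishing Point essentially let you drag those convergence points around rather than compute them by hand.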

“Ruining” art with Nano Banana

But, y’know, in a fun & cheeky way. 🙂 Check out this little iterative experiment from Ethan Mollick:

Ruining art with Gemini 2.5 Flash. (These are all the prompts, in their entirety)

“make this painting less gloomy”

“it is still pretty disturbing, make it less gloomy emotionally”

“even less gloomy” pic.twitter.com/IYrItsAGFw

— Ethan Mollick (@emollick) August 27, 2025

As a longtime Bosch enthusiast, I’m partial to this one:

Reminds me of the time in 2023 (i.e. 10,000 AI years ago) that I forced DALL•E to keep making images look more & more “cheugy”:

And finally:

“Awesome! Can we see post-apocalyptic levels of ‘cheugy’?”

The End. pic.twitter.com/4wgAj5fkYF

— John Nack (@jnack) December 14, 2023

Spin me right ’round, Illustrator

I’m excited to check out this rather eye-popping new Illustrator feature, and I’m installing the beta as I type:

Adobe just released Turntable in Illustrator (beta)

You can now rotate 2D vector art in 3D!

No Redrawing, just drag the slider and done pic.twitter.com/ASciv7casR

— Kris Kashtanova (@icreatelife) August 19, 2025

Another cool example:

Turntable is now available in the Adobe #Illustrator Public Beta Build 29.9.14!!!

A feature that lets you “turn” your 2D artwork to view it from different angles. With just a few steps, you can generate multiple views without redrawing from scratch.

Whether you’re creating… pic.twitter.com/w0ekhbyIlg

— Luke Choice (@velvetspectrum) August 19, 2025

A deep dive into Photoshop’s new Harmonize feature

Jesús Ramirez is a master Photoshop compositor, so it’s especially helpful to see his exploration of some of the new tool’s strengths & weaknesses (e.g. limited resolution)—including ways to work around them.

An homage to classic sign painting

“Sometimes the old ways are the best” — Kincade, Bond’s old groundskeeper in Skyfall

Medieval papercut Ukraine animation

I mean, how would you describe it? No matter what, though, I love the aesthetics:

The 2D waves take me back to this old fave:

My birthday gift: Ditching AI

My family, having seen so many of my AI-powered image generations over the last 3 years, is just utterly inured to them. So, for my MiniMe’s 16th, I sketched up the patriotic little HO-scale engine we’re getting him, along with a cute large ground squirrel (to quote the Dude, “Nice marmot”).

I feel like this is my micro version of when the world revolted against too-perfect Instagram culture, swinging towards Snapchat & stories, where “rough is real,” and flaws are a feature. In any case, my dude was happy as a clam—and that’s all that matters to me.

For my son’s birthday, I ditched AI and broke out my pen. Felt good to work without a net. pic.twitter.com/TJedYCPWMj

— John Nack (@jnack) July 4, 2025

Little Happy AI Trees

“Today we won’t need our paints or our brushes, or our joy.” Oh boy…

“A surrealist design engine no one asked for”

A while back, Sam Harris & Ricky Gervais discussed the impossibility of translating a joke discovered during a dream (“What noise does a monster make?”) back into our consensus waking reality. Like… what?

I get the same vibes watching ChatGPT try to dredge up some model of me and of… humor?… in creating a comic strip based on our interactions. I find it uncanny, inscrutable, and, for that very reason, charming all at once.

“Hey ChatGPT, based on what you know about me, please create a four-panel comic you think I’d like…” https://t.co/U7WRfShGRh

— John Nack (@jnack) June 4, 2025

GPT-4o infographics: Faraway, so close!

Things are night-and-day better than they were just a month ago (in the dark DALL•E days), but would you like your owl with FEAFERS?

Oh, ChatGPT, you are *almost* good at infographics… But what’s with the EATON and FEAFERS? pic.twitter.com/K7vDRjRsdP

— John Nack (@jnack) April 16, 2025

GPT-4o image creation is coming to Designer!

Having created 200+ images in just the last month via this still-new image model (see the new blog category that gathers some of them), I’m delighted to say that my team is working to bring it to Microsoft Designer, Copilot, and beyond. From the boss himself:

5/ Create: This one is fun. Turn a PowerPoint into an explainer video, or generate an image from a prompt in Copilot with just a few clicks.

We’ve also added new features to make Copilot even more personalized to you, plus a redesigned app built for human-agent collaboration. pic.twitter.com/m1oTf53aai

— Satya Nadella (@satyanadella) April 23, 2025

StarVector: Text/Image->SVG Code

Back at Adobe we introduced Firefly text-to-vector creation, but behind the scenes it was really text-to-image-to-tracing. That could be fine, actually, provided that the conversion process did some smart things around segmenting the image, moving objects onto their own layers, filling holes, and then harmoniously vectorizing the results. I’m not sure whether Adobe actually got around to shipping that support.

In any event, StarVector promises actual, direct creation of SVG. The results look simple enough that it hasn’t yet piqued my interest to the point of spending time with it, but I’m glad that folks are trying.

StarVector official app is out on Hugging Face

Generating Scalable Vector Graphics Code from Images and Text pic.twitter.com/4nIr0eHJzG

— AK (@_akhaliq) March 24, 2025

Rive introduces Vector feathering

I really hope that the makers of traditional vector-editing apps are paying attention to rich, modern, GPU-friendly techniques like this one. (If not, and I somewhat cynically expect they’re not, it won’t be for lack of trying on my part to put it onto their radar. ¯\_(ツ)_/¯)

Introducing Vector Feathering — a new way to create vector glow and shadow effects. Vector Feathering is a technique we invented at Rive that can soften the edge of vector paths without the typical performance impact of traditional blur effects. (Audio on) pic.twitter.com/39kfjmFsTJ

— Rive (@rive_app) February 11, 2025

Vibe-animating with Magic Animator?

I know only what you see below, but Magic Animator (how was that domain name available?) promises to “Animate your designs in seconds with AI,” which sounds right up my alley, and I’ve signed up for their waitlist.

Figma designs, animated with AI

Magic Animator by @LottielabHQ coming soon pic.twitter.com/qvxxIggT0J

— Daryl Patigas (@darel023) April 14, 2025

Another set of designs animated by AI

Magic Animator waitlist is now open → https://t.co/Gc2N18Kk6X ✨

Breakdown in thread https://t.co/D5pq3u9xTs pic.twitter.com/suwceDd4ED

— Daryl Patigas (@darel023) April 17, 2025

Draw 3D-styled characters with Google Gemini

Check out this fun toy:

[1/8] Drawing → 3D render with Gemini 2.0 image generation… by @dev_valladares + me

Make your own at the link below https://t.co/sy8poJZYuQ pic.twitter.com/DkGT6DRUsb

— Trudy Painter (@trudypainter) April 4, 2025

Apparently I’m over my quota, so sadly the world will never get to see a Ghiblified rendering of my crudely drawn goldendoodle!

ChatGPT reimagines family photos

“Dress Your Family in Corduroy and Denim” — David Sedaris

“Turn your fam into Minecraft & GTA” — Bilawal Sidhu

Entire ComfyUI workflows just became a text prompt.

Open an image in GPT-4o and type “turn us into Roblox / GTA-3 /Minecraft / Studio Ghibli characters” pic.twitter.com/rCXclZklq5

— Bilawal Sidhu (@bilawalsidhu) March 26, 2025

And meanwhile, on the server side:

ChatGPT when another Studio Ghibli request comes in pic.twitter.com/NF5sy24GlU

— Justine Moore (@venturetwins) March 26, 2025

Runway reskins rock

Another day, another set of amazing reinterpretations of reality. Take it away, Nathan…

3 tests of Runway’s first frame feature. It’s very impressive and temporally coherent. Input is a video and stylized first frame. ✨

First example here is a city aerial to: circuit board, frost, fire, Swiss cheese, Tokyo. #aivideo #VFX pic.twitter.com/Y7HST74uBy

— Nathan Shipley (@CitizenPlain) March 6, 2025

…and Bilawal:

Playing guitar, reskinned with Runway’s restyle feature — pretty epic for digital character replacement.

I’m genuinely impressed by how well the fretting & strumming hands hold up.

Not perfect yet, but pulling this off would basically be impossible with Viggle or even Wonder… pic.twitter.com/UJBS9c8U1a

— Bilawal Sidhu (@bilawalsidhu) March 7, 2025

Mystic structure reference: Dracarys!

I love seeing the Magnific team’s continued rapid march in delivering identity-preserving reskinning:

IT’S FINALLY HERE!

Mystic Structure Reference!

Generate any image controlling structural integrity Infinite use cases! Films, 3D, video games, art, interiors, architecture… From cartoon to real, the opposite, or ANYTHING in between!

Details & 12 tutorials pic.twitter.com/brw4Dx39gz

— Javi Lopez (@javilopen) February 27, 2025

This example makes me wish my boys were, just for a moment, 10 years younger and still up for this kind of father/son play. 🙂

Storyboarding? No clue! But with some toy blocks, my daughter’s wild imagination, and a little help from Magnific Structure Reference, we built a castle attacked by dragons. Her idea coming to life powered up with AI magic.

Just a normal Saturday Morning.

Behold, my daughter’s… pic.twitter.com/52tDZokmIT

— Jesus Plaza (@JesusPlazaX) March 8, 2025

Behind the scenes: AI-augmented animation

“Rather than removing them from the process, it actually allowed [the artists] to do a lot more—so a small team can dream a lot bigger.”

Paul Trillo’s been killing it for years (see innumerable previous posts), and now he’s given a peek into how his team has been pushing 2D & 3D forward with the help of custom-trained generative AI:

Traditional 2d animation meets the bleeding edge of experimental techniques. This is a behind the scenes look at how we at Asteria brought the old and the new together in this throwback animation “A Love Letter to Los Angeles” and collaboration with music artist Cuco and visual… pic.twitter.com/3eWSdgckXn

— Paul Trillo (@paultrillo) March 7, 2025

Charmingly terrible AI-made infographics

A passing YouTube vid made me wonder about the relative strengths of World War II-era bombers, and ChatGPT quickly obliged by making me a great little summary, including a useful table. I figured, however, that it would totally fail at making me a useful infographic from the data—and that it did!

Just for the lulz, I then ran the prompt (“An infographic comparing the Avro Lancaster, Boeing B-17, and Consolidated B-24 Liberator bombers”) through a variety of apps (Ideogram, Flux, Midjourney, and even ol’ Firefly), creating a rogues’ gallery of gibberish & Franken-planes. Check ’em out.

Currently amusing myself with how charmingly bad every AI image generator is at making infographics—each uniquely bizarre! pic.twitter.com/U3cs8ySoVa

— John Nack (@jnack) March 6, 2025

Minimalist mograph in ChatGPT’s Super Bowl spot

I really love the way the visual medium (simply black & white dots) enriches & evolves right alongside its subject matter in this ad for ChatGPT, and I hope we get to hear more soon from the creative team behind it.

Celebrating the skate art of Jim Phillips

If you’re like me, you may well have spent hours of your youth lovingly recreating the iconic designs of pioneering Santa Cruz artist Jim Phillips. My first deck was a Roskopp 6, and I covered countless notebook covers, a leg cast, my bedroom door, and other surfaces with my humble recreations of his work.

That work is showcased in the documentary “Art And Life,” screening on Thursday in Santa Cruz. I hope to be there, and maybe to see you there as well. (To this day I can’t quite get over the fact that “Santa Cruz” is a real place, and that I can actually visit it. Growing up it was like “Timbuktu” or “Shangri-La.” Funny ol’ world.)

SNL goes God mode

“The whole solar system honestly slaps…” -God

This is 100% how all of my younger colleagues’ conversations sound to me. 🙂

Oil painting in Photoshop with AI

Karen X, back doing crafty Karen X things:

AI painting tutorial

Edited on my Intel AI PC – the DELL XPS 13 powered by Intel Core Ultra #ad pic.twitter.com/wcqpR3RhFk

— Karen X. Cheng (@karenxcheng) December 10, 2024

The world’s most laborious stick-figure animation?

Could be—but that’s what makes it fun! Take it away, Stephen:

Celebrating Saul Bass

It’s a real joy to see my 15yo son Henry’s interest in design & photography blossom, and last night he fell asleep perusing the giant book of vintage logos we scored at the Art Institute of Chicago. I’m looking forward to acquainting him with the groundbreaking work of Saul Bass & figured we’d start here:

FlipSketch promises text-to-animation

We present FlipSketch, a system that brings back the magic of flip-book animation — just draw your idea and describe how you want it to move! …

Unlike constrained vector animations, our raster frames support dynamic sketch transformations, capturing the expressive freedom of traditional animation. The result is an intuitive system that makes sketch animation as simple as doodling and describing, while maintaining the artistic essence of hand-drawn animation.

Oh, I love this one!

FlipSketch can generate sketch animations from static drawings using text prompts!

Links ⬇️ pic.twitter.com/1XPzkWfaEl

— Dreaming Tulpa (@dreamingtulpa) November 22, 2024

AI fixes (?) The Polar Express

Hmm—“fix” is a strong word for reinterpreting the creative choices & outcomes of an earlier generation of artists, but it’s certainly interesting to see the divisive Christmas movie re-rendered via emerging AI tech (Midjourney Retexturing + Hailuo Minimax). Do you think the results escape the original’s deep uncanny valley? See more discussion here.

Someone fixed Polar Express (Midjourney Retexturing + Hailuo Minimax) pic.twitter.com/6RjrABbAxO

— Angry Tom (@AngryTomtweets) November 12, 2024

Beautiful animated titles for “La Maison”

Happy Friday, y’all.

Bonus: Speaking of French fashion & technology, check out punch-card tech from 200+ years ago! (Side note: the machine lent its name to Google & Levi’s Project Jacquard smart clothing.)

[Both via fashionista/technologist Margot Nack]

Surreal analog creations from Lola Dupre

“I’m so f*ckin’ sick & tired of the Photoshop” — Kendrick Lamar, and possibly Lola Dupre:

[Via Uri Ar]