I’m not fully sure what this rather eye-popping little demo says about how our brains perceive reality, and thus what we can & cannot trust, but dang if it isn’t interesting:

Walking through the new Firefly video editor

Secret Squirrel rides again!

I was so chuffed to text my wife from the Adobe MAX keynote and report that the next-gen video editor she’d kicked off as PM several years ago has now come to the world, at least in partial form, as the new Firefly Video Editor (currently accepting requests for access). Here our pal Dave Werner provides a characteristically charming tour:

Insane Lego La Catrina

“Jesus Christ!!” — my 16yo Lego lover

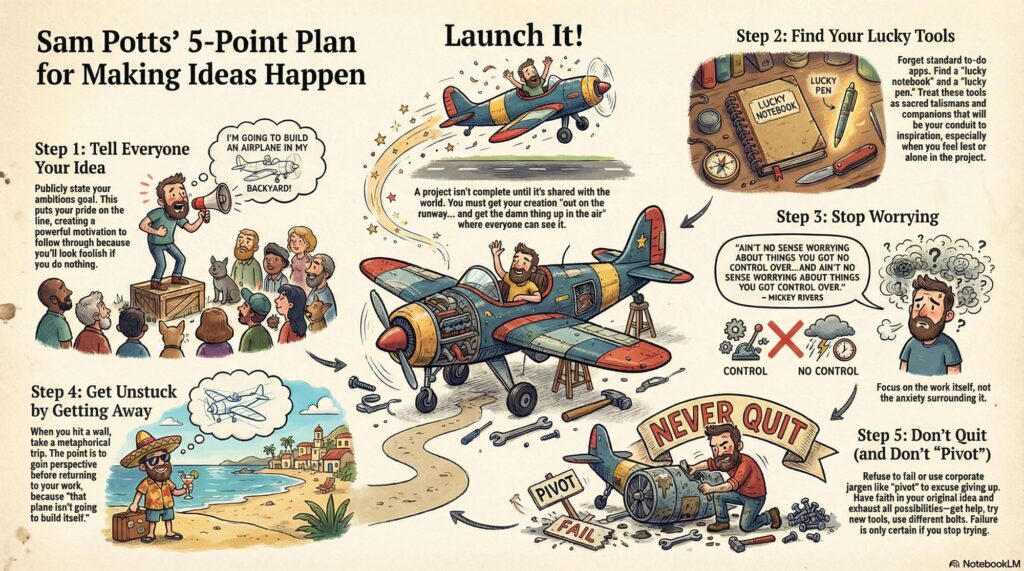

Design: Finding your sacred objects

My old pal Sam is one of the most thoughtful, down-to-earth guys you’re ever likely to meet in the design community, and if you’re looking for a calm but re-energizing way to spend a few minutes, I think you’ll really enjoy his seven-minute talk below. I won’t spoil anything, but do trust me. 🙂

And here’s a little visual summary courtesy of NotebookLM:

“How ChatGPT is fueling an existential crisis in education”

I thought this was a pretty interesting & thoughtful conversation. It’s worth thinking about ways to evaluate & reward process (hard work through challenges) and not just product (final projects, tests, etc.). AI obviously makes it easy to skip the former in pursuit of the latter, but (shocker!) people then don’t build know-how around solving problems, or even remember (much less feel pride in) the artifacts they produce.

The issues go a lot deeper, to the very philosophy of education itself. So we sat down and talked to a lot of teachers — you’ll hear many of their voices throughout this episode — and we kept hearing one cri du coeur again and again: What are we even doing here? What’s the point?

Links, courtesy of the Verge team:

- A majority of high school students use gen AI for schoolwork | College Board

- About a quarter of teens have used ChatGPT for schoolwork | Pew Research

- Your brain on ChatGPT | MIT Media Lab

- My students think it’s fine to cheat with AI. Maybe they’re on to something. | Vox

- How children understand and learn from conversational AI | McGill University

- File not Found | The Verge

Adobe Research debuts incredibly fast video synthesis

Check out MotionStream, “a streaming (real-time, long-duration) video generation system with motion controls, unlocking new possibilities for interactive content generation.” It’s said to run at 29fps on a single H100 GPU (!).

MotionStream: Real-time, interactive video generation with mouse-based motion control; runs at 29 FPS with 0.4s latency on one H100; uses point tracks to control object/camera motion and enables real-time video editing. https://t.co/fFi9iB9ty7 pic.twitter.com/zKb9u3bj9g

— Wildminder (@wildmindai) November 4, 2025

What I’m really wondering, though, is whether/when/how an interactive interface like this can come to Photoshop & other image-editing environments. I’m not yet sure how the dots connect, but could it be paired with something like this model?

Qwen Image Multiple Angles LoRA is an exquisitely trained LoRA!˚₊‧꒰ა

Keep character and scenes consistent, and flies the camera around! Open source got there! One of the best LoRAs I’ve come across lately pic.twitter.com/1mkmCpXgIY

— apolinario (@multimodalart) November 5, 2025

Chocolate-coated glass shards

Oh man, this parody of the messaging around AI-justified (?) price increases is 100% pitch perfect. (“It’s the corporate music that sends me into a rage.”)

“We Built The Matrix to Train You for What’s Coming”

My friend Bilawal got to sit down with VFX pioneer John Gaeta to discuss “A new language of perception,” Bullet Time, groundbreaking photogrammetry, the coming Big Bang/golden age of storytelling, chasing “a feeling of limitlessness,” and much more.

In this conversation:

— How Matrix VFX techniques became the prototypes for AI filmmaking tools, game engines, and AR/VR systems

— How The Matrix team sourced PhD thesis films from university labs to invent new 3D capture techniques

— Why “universal capture” from Matrix 2 & 3 was the precursor to modern volumetric video and 3D avatars

— The Matrix 4 experiments with Unreal Engine that almost launched a transmedia universe based on The Animatrix

— Why dystopian sci-fi becomes infrastructure (and what that means for AI safety)

— Where John is building next: Escape.art and the future of interactive storytelling

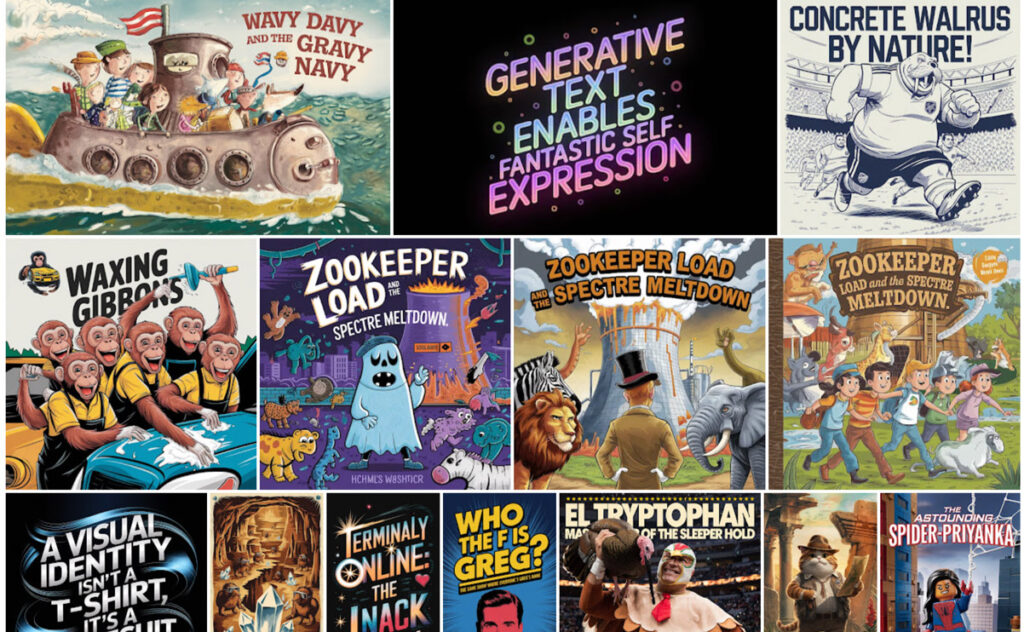

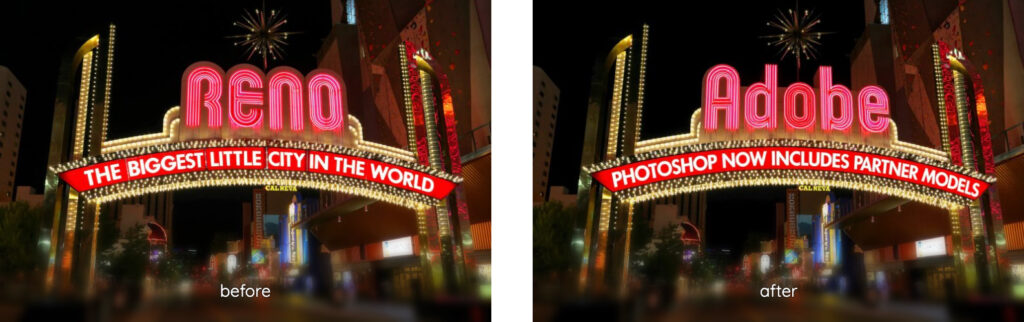

A cool new Photoshop feature (that’s still kinda dumb)

I’m pleased to see that, as promised back in May, Photoshop has added a “Dynamic Text” toggle that automatically adjusts the size of the letters in each line to produce a visually “packed” look:

Results can be really cool, but because the model has no knowledge of the meaning and importance of each word, they can sometimes look pretty dumb. Here’s my canonical example, which visually emphasizes exactly the wrong thing:

I continue to want to see the best of both worlds, with a layout engine taking into account the meaning & thus visual importance of words—like what my team shipped last year:

I’m absolutely confident that this can be done. I mean, just look at the kind of complex layouts I was knocking out in Ideogram a year ago.

The missing ingredient is just the link between image layouts & editability—provided either by bitmap->native conversion (often hard, but doable in some cases), or by in-place editing (e.g. change “Merry Christmas” to “Happy New Year” on a sign, then regenerate the image using the same style & dimensions)—or both.

Bonus points go to the app & model that enable generation with transparency (for easy compositing), or conversion to vectors—or, again, ¿porque no los dos? 🙂
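For the curious, here’s a rough sketch of the naive per-line fitting that a “packed” layout implies. To be clear, this is not Adobe’s implementation: it assumes a crude fixed average glyph width (0.6 em) instead of real font metrics, and the names (`pack_lines`, `estimated_width`, `FittedLine`) are hypothetical. Each line is scaled independently to fill the box width, so the shortest line gets the biggest type whether or not it matters.

```python
from dataclasses import dataclass

# Assumption: a crude width model where every character is ~0.6 em wide.
# A real layout engine would measure actual glyph advances from the font.
AVG_GLYPH_WIDTH_EM = 0.6


@dataclass
class FittedLine:
    text: str
    font_size: float  # in points


def estimated_width(text: str, font_size: float) -> float:
    """Rough rendered width (in points) of `text` at `font_size`."""
    return len(text) * AVG_GLYPH_WIDTH_EM * font_size


def pack_lines(lines: list[str], box_width: float,
               max_size: float = 200.0) -> list[FittedLine]:
    """Scale each line independently so it spans the full box width.

    Short lines get big and long lines get small, with no notion of
    which words actually matter. `max_size` caps very short lines.
    """
    fitted = []
    for text in lines:
        width_at_1pt = estimated_width(text, 1.0)
        # Font size that makes this line exactly as wide as the box.
        size = box_width / width_at_1pt if width_at_1pt else max_size
        fitted.append(FittedLine(text, min(size, max_size)))
    return fitted
```

With `pack_lines(["HUGE SALE", "AT", "JOE'S HARDWARE"], 300.0)`, the throwaway word “AT” hits the size cap and dwarfs everything else, which is the failure mode described above. A meaning-aware engine would need some per-word importance signal feeding into those sizes, which this sketch deliberately leaves out.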

Demo: Flux vs. Nano Banana inside Photoshop

I recently shared a really helpful video from Jesús Ramirez that showed practical uses for each model inside Photoshop (e.g. text editing via Flux). Now here’s a direct comparison from Colin Smith, highlighting these strengths:

- Flux: Realistic, detailed; doesn’t produce unwanted shifts in regions that should stay unchanged. Tends to maintain more of the original image, such as hair or background elements.

- Nano Banana: Smooth & pleasing (if sometimes a bit “Disney”); good at following complex prompts. May be better at removing objects.

These specific examples are great, but I continue to wish for more standardized evals that would help produce objective measures across models. I’m investigating the state of the art there. More to share soon, I hope!

Emu 3.5 looks seriously impressive

Improvements in imaging continue at a breakneck pace, as engines evolve from “simple” text-to-image (which we considered miraculous just three years ago, and which I still kinda do, TBH) to understanding time & space.

Now Emu (see project page, code) can create entire multi-page/image narratives, turn 2D images into 3D worlds, and more. Check it out:

Adobe previews layered image generation

Can’t wait to try this out!

We’ve been thinking a lot about how generative AI can make editing feel faster, smarter, and more intuitive without losing the control creators love.

Today at #AdobeMAX, the #AdobeFirefly team previewed Layered Image Editing: bringing the power of layers and compositing together… pic.twitter.com/VVPtv9hVbK

— Alexandru Costin (@acostin) October 28, 2025

Greetings from Adobe MAX!

I’m down in LA having tons of great conversations around AI and the future of creativity. If you want to chat, please hit me up. firstname dot lastname at gmail.

Nodevember comes early: Runway Workflows

“Nodes, nodes, nodes!” — my exasperated then-10yo coming home from learning Unreal at summer camp 🙂

Love ’em or hate ’em, these UI building blocks seem to be everywhere these days—including in Runway’s new Workflows environment:

Introducing Workflows, a new way to build your own tools inside of Runway.

Now you can create your own custom node-based workflows chaining together multiple models, modalities and intermediary steps for even more control of your generations. Build the Workflows that work for… pic.twitter.com/5VHABPj8et

— Runway (@runwayml) October 21, 2025

Alloy promises PMs on-brand prototyping

Hmm—consider me intrigued:

Alloy is AI Prototyping built for Product Management:

➤ Capture your product from the browser in one click

➤ Chat to build your feature ideas in minutes

➤ Share a link with teammates and customers

➤ 30+ integrations for PM teams: Linear, Notion, Jira Product Discovery, and more

Check out the brief demo:

It’s official – I’m excited to introduce Alloy (@alloy_app), the world’s first tool for prototypes that look exactly like your product.

All year, PMs and designers have struggled with off-brand prototypes – built with “app builder” tools that look nothing like their existing… pic.twitter.com/DztKl2HtQg

— Simon Kubica (@simon_kubica) September 23, 2025

Demo: Specific, practical uses of Flux + Nano Banana inside Photoshop

Twitter (yes, always “Twitter”) can be useful, but a ton of the AI-related posts there are often fairly superficial and/or impractical rehashes of eye candy that garners attention & not much else.

By contrast, Photoshop expert Jesús Ramirez has put together a really solid, nutrient-dense tour—complete with all his prompts—that I think you’ll find immediately useful. Dive on in, or jump directly to one of the topics linked below.

I particularly like this demo of using Flux to modify the text in an image:

“AI: What Could Go Wrong?” Stewart + Hinton

I really enjoyed Jon Stewart’s super accessible, thoughtful conversation with AI pioneer Geoffrey Hinton. Now I’m constantly going to be thinking about edge detectors, neurons, and beaks!

VSCO Fluxes some generative muscle

I was initially surprised to see VSCO tapping into Flux for generative smarts, but it makes sense: they’re leaning on it to add really good object removal—and not, at least for the moment, to make larger changes. It’ll be interesting to see how their user community responds, and whether they’ll tip some additional toes into these waters (e.g. for creative relighting).

“I’m just a simple Unfrozen Caveman Web designer…”

I had a great time chatting with my fellow former Adobe PM Demian Borba about all things AI (creativity, ethics, ownership, value, and more). You can check out the conversation below, and in case it’s of interest, I used Gemini inside YouTube to create a summary of topics we discussed.

Heralding the AI heralds

Creative Director Alexia Adana constantly explores new expressive tech & writes thoughtfully about her findings. I was kind of charmed to see her deploying the latest tools to form sort of a self-promotional AI herald (below), riding ahead with her tidings:

SNL introduces “ChatGPTío”

“Call your abuela! She is missing you and she has nothing to do.” :-p

“When you use ChatGPT for everything”

Remember those studies showing that using AI makes us dumber? Yeah, about that… 🙂

The Oatmeal: “Let’s talk about AI Art”

This long strip is equal parts heartfelt & hilarious. You should read the whole thing (really, it’s very good), but this bit stuck with me:

As did this sick burn :-p

Flux hackathon provides perspective

The team at BFL is celebrating some of the most interesting, creative uses of the Flux model. Having helped bring the Vanishing Point tool to Photoshop, I’ve always been interested in building more such tech, so this one caught my eye:

Best Overall Winner

Perspective Control using Vanishing Points (jschoormans)

Just like Renaissance artists who start with perspective grids, this Kontext LoRa lets you control the exact perspective point in AI-generated images. pic.twitter.com/phAY41KYdP

— Black Forest Labs (@bfl_ml) October 1, 2025

The Daily Show says, “Eat your slop!”

“With just a few clicks and the energy demands of a small European nation, you can create an ass-load of dumb shit with zero meaning!” :-p

Snapseed adds automatic object selection & editing

Back when I worked in Google Research, my teammates developed fast models that divide images & video into segments (people, animals, sky, etc.). I’m delighted that they’ve now brought this tech to Snapseed:

The new Object Brush in Snapseed on iOS, accessible in the “Adjust” tool, now lets you edit objects intuitively. It allows you to simply draw a stroke on the object you want to edit and then adjust how you want it to look, separate from the rest of the image.

Check out the team blog post for lots of technical details on how the model was trained.

The underlying model powers a wide range of image editing and manipulation tasks and serves as a foundational technology for intuitive selective editing. It has also been shipped in the new Chromebook Plus 14 to power AI image editing in the Gallery app. Next, we plan to integrate it across more image and creative editing products at Google.

AI “Horseless Carriages”

I was pleasantly surprised to see my old Google Photos manager David Lieb pop up in this brief clip from Y Combinator, where he now works, discussing how the current batch of AI-enabled apps somewhat resembles the original “horseless carriages.” It’s fun to contemplate what’ll come next.

HBoooookay

“A few weeks ago,” writes John Gruber, “designer James Barnard made this TikTok video about what seemed to be a few mistakes in HBO’s logo. He got a bunch of crap from commenters arguing that they weren’t mistakes at all. Then he heard from the designer of the original version of the logo, from the 1970s.”

Check out these surprisingly interesting three minutes of logo design history:

@barnardco “Who. Cares? Unfollowed” This is how a *lot* of people responded to my post about the mistake in the HBO logo. For those that didn’t see it, the H and the B of the logo don’t line up at the top of the official vector version from the website. Not only that, but the original designer @Gerard Huerta700 got in touch! Long story short, we’re all good, and Designerrrs™ community members can watch my interview with Gerard Huerta where we talk about this and his illustrious career! #hbo #typography #logodesign #logo #designtok original sound – James Barnard

How to change your eval ways (baby)

As much as one can be said to enjoy thinking through the details of how to evaluate AI (and it actually can be kinda fun!), I enjoyed this in-depth guide from Hamel Husain & Shreya Shankar.

All year I’ve been focusing pretty intently on how to tease out the details of what makes image creation & editing models “good” (e.g. spelling, human realism, prompt alignment, detail preservation, and more). This talk pops up a level, focusing more on holistic analysis of end-to-end experiences. If you’re doing that kind of work, or even if you just want to better understand the kind of thing that’s super interesting to hiring managers now, I think you’ll find watching this to be time well spent.

Photoshop integrates Flux, Nano Banana

I’m so happy to see Adobe greatly accelerating the pace of third-party API integrations!

FLUX.1 Kontext [Pro] is now in Photoshop!

Starting today, creators worldwide can use FLUX.1 Kontext [Pro] directly inside @Photoshop – no more switching between apps or manually exporting files.

Using FLUX in Generative Fill allows you to edit seamlessly: generating new… pic.twitter.com/9SVDgtGgwy

— Black Forest Labs (@bfl_ml) September 25, 2025

Nano Banana is directly in Photoshop (beta) now

When using Generative Fill select Gemini 2.5 Flash = Nano Banana from the list of models.

The greatest part is you can use it together with Harmonize and other PS features. pic.twitter.com/ncHCU0snil

— Kris Kashtanova (@icreatelife) September 25, 2025

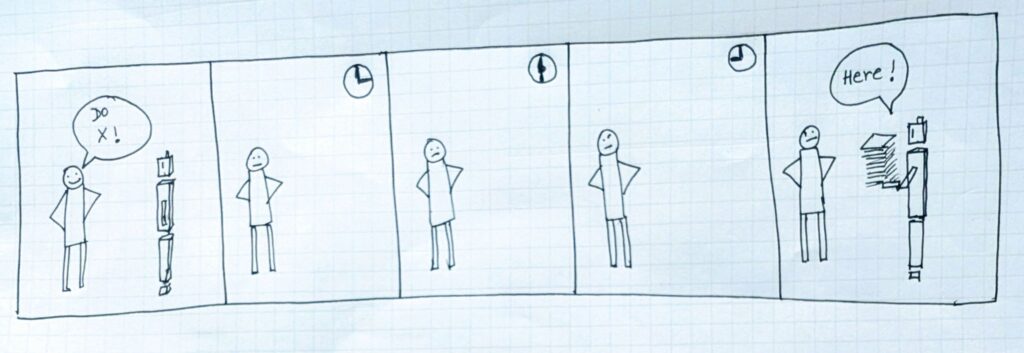

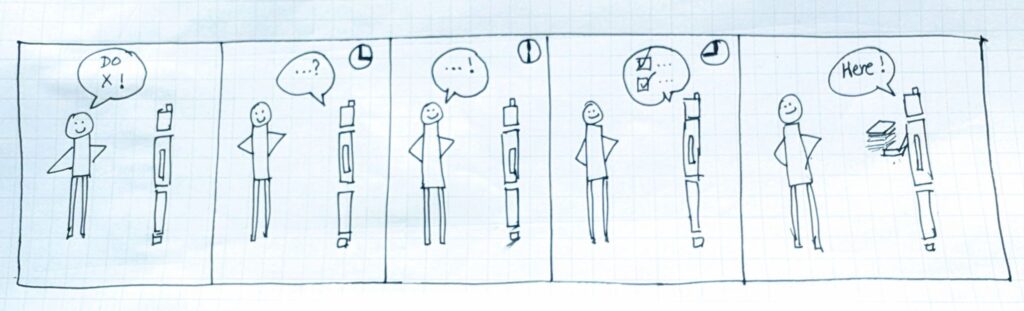

Show your work, AI edition

Microsoft VP Aparna Chennapragada, who recruited me to Microsoft after I reported to her at Google, recently wrote a thoughtful piece about building trust through transparency. Specifically around AI agents, we want less of this…

…and more of this:

I agree completely. Having some thoughtful back-and-forth makes me feel better understood & therefore more confident in my assistant’s work.

And *feel* here is a big deal. As Maya Angelou said, “People won’t remember what you said, or even what you did, but they’ll remember how you made them *feel*.” Microsoft AI leader (and previously DeepMind cofounder) Mustafa Suleyman totally gets this.

Conversely, I just saw a founder advertising his product as “visual storytelling on autopilot.” I get the intent, but I find the phrasing oxymoronic: would any worthwhile “story” be generated by autopilot? Yuck.

When apps try to do too much with my sparse input, seeing the results makes me feel like Neven Mrgan did upon receiving AI-generated slop from a friend: “I was repelled, as if digital anthrax had poured out of the app.” I don’t even want to read such content, much less share it, much less be judged on it.

So yeah, apps: ditch autopilot & instead take the time to show interest & ask good questions. “Slow is smooth, and smooth is fast”—and a little thoughtfulness up front will save me time while increasing my pride of ownership.

Google Flow adds Nano Banana

In addition to adding support for vertical video & greater character consistency, the new Veo-powered storytelling tool now includes direct image creation & manipulation via tiny, tiny fruit:

This new feature… it’s bananas

You can now edit and refine your images directly in Flow using prompts. @NanoBanana maintains the likeness of a subject or scene across different lighting, environments, artistic styles, and more.

See the a-peel for yourself— try it today! pic.twitter.com/vdSvYi0zg3

— FlowbyGoogle (@FlowbyGoogle) September 23, 2025

Google introduces “Learn Your Way”

This paper seems really promising. Starting from a textbook, it promises to generate:

— Mind maps if you think visually

— Audio lessons with simulated teacher conversations

— Interactive timelines

— Quizzes that change based on where you’re struggling

BREAKING: Google Research just dropped the textbook killer.

Its called “Learn Your Way” and it uses LearnLM to transform any PDF into 5 personalized learning formats. Students using it scored 78% vs 67% on retention tests.

The education revolution is here. pic.twitter.com/pyq1urWYVT

— Artificial Intelligence (AI) • ChatGPT (@chatgptricks) September 22, 2025

More details:

This is going to revolutionize education

Google just launched “Learn Your Way” that basically takes whatever boring chapter you’re supposed to read and rebuilds it around stuff you actually give a damn about.

Like if you’re into basketball and have to learn Newton’s laws,… pic.twitter.com/vDkVgrYXoW

— Alex Prompter (@alex_prompter) September 22, 2025

Vibe Coding at Google: Prototyping the all-new AI Studio

Check out these interesting insights from the former head of design at ElevenLabs, who recently joined Google to help build their AI Studio:

In today’s episode Ammaar Reshi shows exactly how he uses AI to prototype ideas for the new Google AI Studio. He shares his Figma files and two example prototypes (including how he vibe-coded his own version of AI Studio in a couple of days). We also go deep into:

— 4 lessons for vibe-coding like a pro

— When to rely on mockups vs. AI prototypes

— Ammaar’s step-by-step process for prompting

— How Ammaar thinks about the fidelity of his prototypes

— a lot more

BFL’s Flux hackathon kicks off

Prizes include $5,000, an NVIDIA RTX 5090 GPU, and $3K in FAL credits. Check out the site for more info.

FLUX.1 Kontext [dev] Hackathon is live!

$10K+ in prizes, open worldwide. 7 days to experiment and surprise us. Create LoRAs, build workflows, or try something totally unexpected.

Run it locally or through our partners @NVIDIA_AI_PC @fal @huggingface

Registration link below pic.twitter.com/TiOAwfQ5G4

— Black Forest Labs (@bfl_ml) September 18, 2025

BeavisGPT

“Jobs suck! HeHeHeHeHe…” :-p

Let’s Dance: Peacemaker Edition

Apropos of the song featured in my previous post, in case you haven’t already beheld the ludicrous majesty of the Peacemaker Season 2 intros, well, stop cheating yourself!

Better still, here’s a peek behind the scenes of creating this inspired mayhem:

Keep on keeping on

This is me, just vibing the F out. IYKYK.

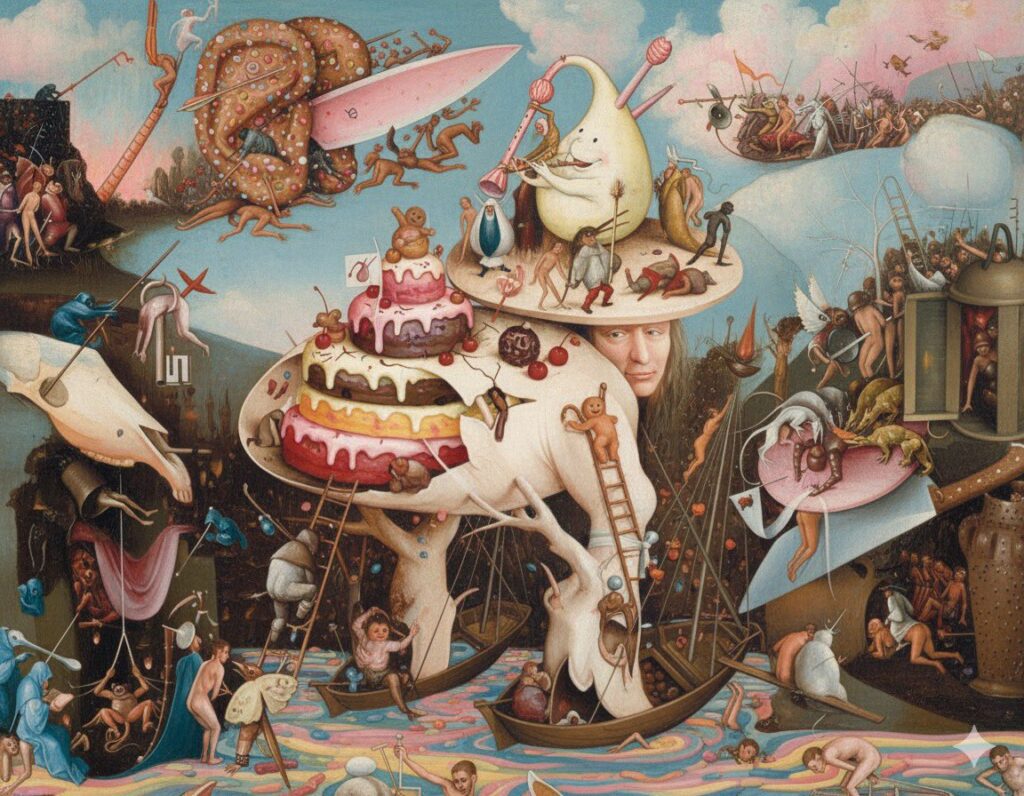

“Ruining” art with Nano Banana

But, y’know, in a fun & cheeky way. 🙂 Check out this little iterative experiment from Ethan Mollick:

Ruining art with Gemini 2.5 Flash. (These are all the prompts, in their entirety)

“make this painting less gloomy”

“it is still pretty disturbing, make it less gloomy emotionally”

“even less gloomy” pic.twitter.com/IYrItsAGFw

— Ethan Mollick (@emollick) August 27, 2025

As a longtime Bosch enthusiast, I’m partial to this one:

Reminds me of the time in 2023 (i.e. 10,000 AI years ago) that I forced DALL•E to keep making images look more & more “cheugy”:

And finally;

“Awesome! Can we see post-apocalyptic levels of ‘cheugy’?”

The End. pic.twitter.com/4wgAj5fkYF

— John Nack (@jnack) December 14, 2023

The Phantom Superbad

I never want to get used to just how transformative the latest crop of AI-powered tools has become! Check out just one of the latest examples:

Imagine being able to mod a movie like a video game.

Stumbled upon this Superbad x Star Wars remix and now I want to do this to everything

Creator is jjohncorbett – a professional VFX supervisor experimenting with AI who made this with Runway. pic.twitter.com/uyzsHi8eMB

— Justine Moore (@venturetwins) September 11, 2025

“I’ve got a Nano Banana in my pocket…”

This fun Chrome extension from Glif lets you right-click any image, then edit it via Google’s new model:

Nano Banana is coming to Photoshop—officially!

“Yes, And”: It’s the golden rule of improv comedy, and it’s the title of the paper I wrote & circulated throughout Adobe as soon as DALL•E dropped 3+ years ago: yes, we should make our own great models, and of course we should integrate the best of what the rest of the world is making! I mean, duh, why wouldn’t we??

This stuff can take time, of course (oh, so much time), but here we are: Adobe has announced that Google’s Nano Banana editing model will be coming to a Photoshop beta build near you in the immediate future.

Side note: it’s funny that in order to really upgrade Photoshop, one of the key minds behind Firefly simply needed to quit the company, move to Google, build Nano Banana, and then license it back to Adobe. Funny ol’ world…

SNEAK PEEK! #NanoBanana (Gemini 2.5 Flash Image) is officially coming to #Photoshop this month!! So exciting!! pic.twitter.com/dEd1p9jYQV

— Paul Trani (@paultrani) September 10, 2025

It’s time to peel back a sneak and reveal that Nano Banana (Gemini 2.5 Flash Image) floats into Photoshop this September!

Soon you’ll be able to combine prompt-based edits with the power of Photoshop’s non-destructive tools like selections, layers, masks, and more! pic.twitter.com/CSLgJYVsHo

— Howard Pinsky (@Pinsky) September 10, 2025

Beautiful new AI mograph explorations

Check out this new work from Alex Patrascu. As generative video tools continue to improve in power & precision, what’ll be the role of traditional apps like After Effects? ¯\_(ツ)_/¯

Liquid Logos

With the right prompt, anything is possible with AI video today.

Images: OpenAI Sora

Animation: Kling 2.1

Editing: CapCut pic.twitter.com/yOgDEwahCo

— Alex Patrascu (@maxescu) September 5, 2025

Quick tip:

If you want to utilize the full power of Start/End frames (Kling or Hailuo), you can make the first frame empty with tour desired color and describe what happens until the last frame is revealed.

Super powerful: pic.twitter.com/xTaQsB06Kt

— Alex Patrascu (@maxescu) September 7, 2025

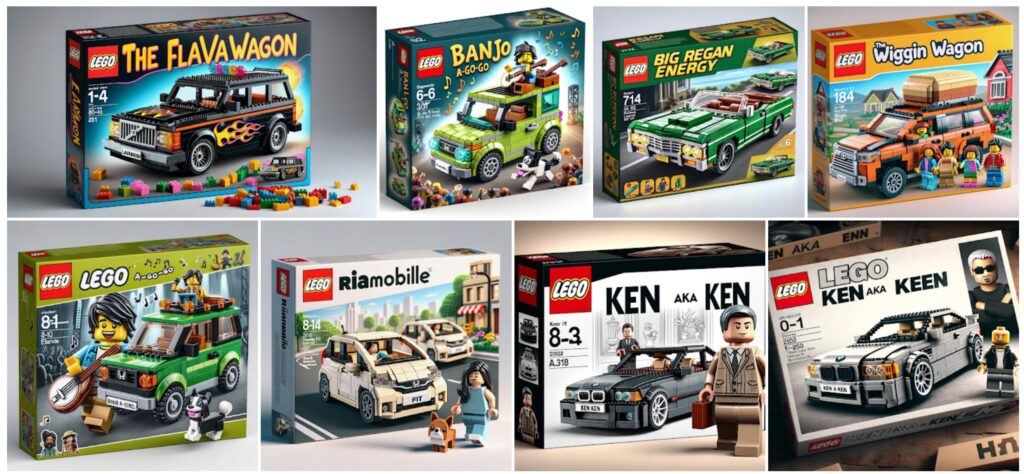

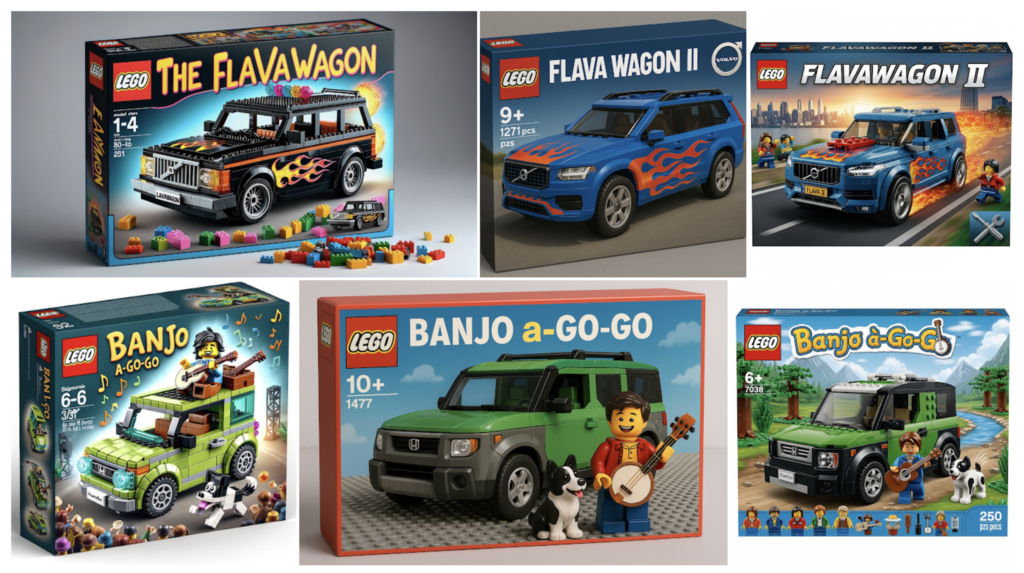

AI Lego Redux

Back when DALL•E 3 launched (not even two years ago, though in AI time it feels like a million), I used it to delight friends by rendering them & their signature vehicles in Lego form.

Now that Google’s Nano Banana model has dropped, I felt like revisiting the challenge, comparing the results to the originals plus new ones from ChatGPT 4o.

As you can see in the results, 4o increases realism relative to DALL•E, but it loses a lot of expressiveness & soul. Nano Banana manages to deliver the best of both worlds.

Great demos of Nano Banana

Day-to-night, changes of perspective, kids becoming Legos, and more: check out this quick tour of some of the amazing possibilities:

Nano Banana comes to Photoshop

Rob de Winter is back at it, mixing in Google’s new model alongside Flux Kontext.

Rob notes,

From my experiments so far:

• Gemini shines at easy conversational prompting, character consistency, color accuracy, understanding reference images

• Flux Kontext wins at relighting, blending, and atmosphere consistency

Barber, gimme the “Kling-Nano Banana…”

And yes, I do feel like I’m having a stroke when I type out actual phrases like that. 🙂 But putting that aside, check out the hairstyling magic that can come from pairing Google’s latest image-editing model with an image-to-video system:

Want to try a new haircut? Check out this AI workflow:

1. upload a selfie & prompt your desired haircut

2. uses Nano Banana to generate your haircut

3. then Kling 2.1 morphs from old you to new you

4. Claude helping behind the scenes with all the prompts

link to glif below pic.twitter.com/9QO2EArOsu

— fabian (@fabianstelzer) August 29, 2025

“Sorry, you’ve gotta have the AI”

Feeling seen/attacked. :-p

“The hidden design of the Apple AirPod”

Oddly enough, my son was just asking me about how the tiny batteries in these things work. Check out this surprisingly accessible & detailed peek inside.

Should movies look “fake”?

“Where does the light come from?”

“The same place as the music.”

— Andrew Lesnie, Lord of the Rings cinematographer

why movies should look fake pic.twitter.com/NlKoegORPo

— patrick. (@imPatrickT) August 23, 2025